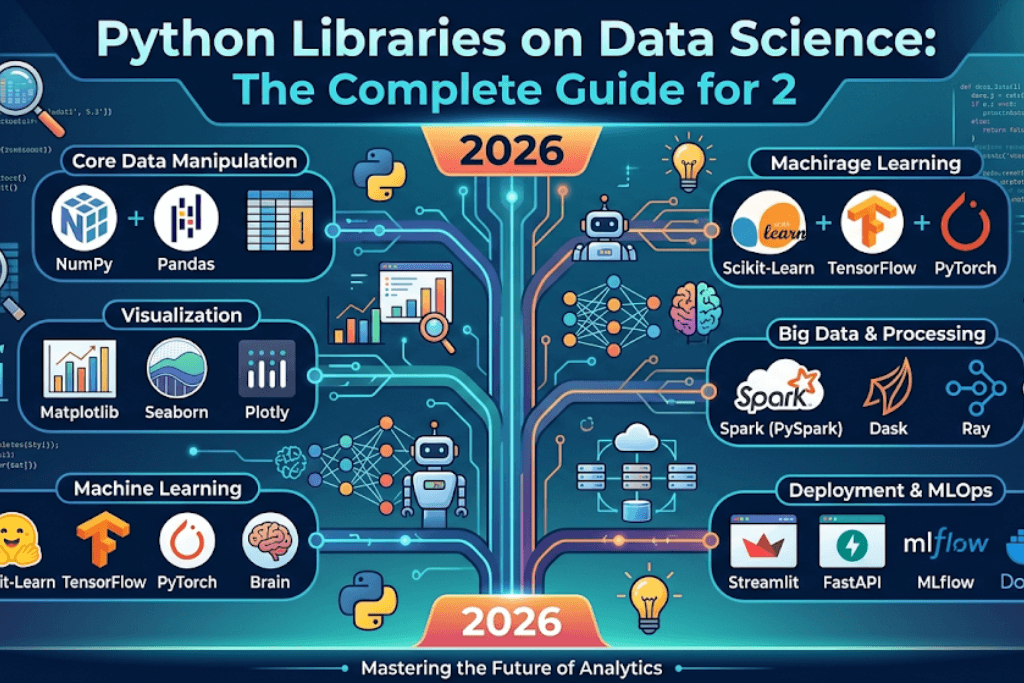

Introduction: Why Python Libraries for Data Science Dominate the Industry

When discussing modern analytics and artificial intelligence, few topics generate as much excitement as Python libraries for data science. These toolkits have transformed how professionals extract insights from raw data, build predictive models, and create clear visualizations. The ecosystem has grown rapidly, with over 300,000 packages available on PyPI, yet only a handful form the core of every data scientist’s toolkit. Knowing which libraries to learn first, and how to use them effectively, can accelerate your career from junior analyst to senior data scientist. This guide explores the most important Python libraries for data science in 2026, with installation instructions, code examples, performance comparisons, and real-world use cases. Whether you are a student, a career changer, or an experienced programmer, mastering these libraries will open doors to roles in finance, healthcare, e-commerce, and artificial intelligence research.

Chapter 1: What Makes Python Libraries for Data Science So Powerful?

Before diving into specific tools, it is worth understanding why Python libraries have become the global standard for data science. Unlike proprietary platforms such as SAS or MATLAB, they are open-source, freely available, and supported by large communities, so the skills you build with them transfer across industries and companies. They also improve continuously: hundreds of developers contribute bug fixes, performance enhancements, and new features every month. Interoperability is another key advantage. NumPy arrays feed directly into Pandas DataFrames, which can be visualized with Matplotlib, transformed with Scikit-learn, and used to train deep learning models in TensorFlow. This integration makes the Python stack far more productive than a mix of disparate tools. Finally, the documentation and learning resources are unparalleled, with thousands of free tutorials, Stack Overflow answers, and GitHub repositories.
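The NumPy-to-Pandas hand-off described above fits in a few lines. This minimal illustration uses a made-up four-row dataset, and the column names units, price, and revenue are hypothetical. A NumPy array becomes a labeled DataFrame, a derived column is added, and plain arrays come back out in the form Scikit-learn estimators expect.

```python
import numpy as np
import pandas as pd

# Raw numeric data as a NumPy array: 4 samples x 2 features (toy values)
raw = np.array([[1.0, 200.0],
                [2.0, 150.0],
                [3.0, 300.0],
                [4.0, 250.0]])

# Wrap the array in a labeled DataFrame for exploration and feature creation
df = pd.DataFrame(raw, columns=["units", "price"])
df["revenue"] = df["units"] * df["price"]

# Hand plain ndarrays back out: the format Scikit-learn estimators accept
features = df[["units", "price"]].to_numpy()
target = df["revenue"].to_numpy()
```

Because to_numpy() returns an ordinary ndarray, the same features array could be passed straight to a model's fit() method or to Matplotlib's plotting functions.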

Chapter 2: NumPy – The Foundation of All Python Libraries for Data Science

No discussion of Python libraries for data science can begin without NumPy (Numerical Python). NumPy is the foundational layer on which almost all other data science libraries are built. Its primary contribution is the ndarray (n-dimensional array) object, which enables fast, vectorized operations on large numerical datasets. Libraries such as Pandas and Scikit-learn rely on NumPy arrays for efficient memory storage and computation, and frameworks such as TensorFlow accept and return them directly.
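Vectorized code stays short partly because of broadcasting: NumPy stretches a smaller array across a larger one when their trailing dimensions match. The sketch below subtracts per-column means of shape (3,) from a (4, 3) matrix and checks the result against an explicit loop. The toy values are chosen only for readability. The normalization step in the code that follows relies on the same rule.

```python
import numpy as np

# 4 samples x 3 features (toy values)
data = np.array([[1.0, 10.0, 100.0],
                 [2.0, 20.0, 200.0],
                 [3.0, 30.0, 300.0],
                 [4.0, 40.0, 400.0]])

col_means = data.mean(axis=0)  # shape (3,): one mean per feature column
centered = data - col_means    # (4, 3) - (3,) broadcasts the means across every row

# The same computation as an explicit Python loop, for comparison
centered_loop = np.empty_like(data)
for i in range(data.shape[0]):
    for j in range(data.shape[1]):
        centered_loop[i, j] = data[i, j] - col_means[j]
```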

Installing NumPy

```bash
pip install numpy
```

Core NumPy Functionality for Data Science

```python
import numpy as np

# Creating arrays for data science workflows
arr = np.array([1, 2, 3, 4, 5])
zeros = np.zeros((3, 4))  # 3x4 matrix of zeros
random_data = np.random.randn(1000, 10)  # 1000 samples, 10 features

# Vectorized operations (typically far faster than equivalent Python loops)
mean_values = random_data.mean(axis=0)
standard_deviation = random_data.std(axis=0)
normalized = (random_data - mean_values) / standard_deviation

# Linear algebra for machine learning
matrix_a = np.random.rand(50, 20)
matrix_b = np.random.rand(20, 5)
product = matrix_a @ matrix_b  # Matrix multiplication: (50, 20) @ (20, 5) -> (50, 5)
```

Why NumPy Remains Essential Among Python Libraries for Data Science

Even with newer alternatives like CuPy (GPU-accelerated arrays) and JAX (automatic differentiation), NumPy remains the most widely used numerical library in the ecosystem because of its stability, documentation, and compatibility. According to the 2026 JetBrains Python Developers Survey, 97% of data scientists use NumPy regularly, making it the most widely adopted Python library for data science.

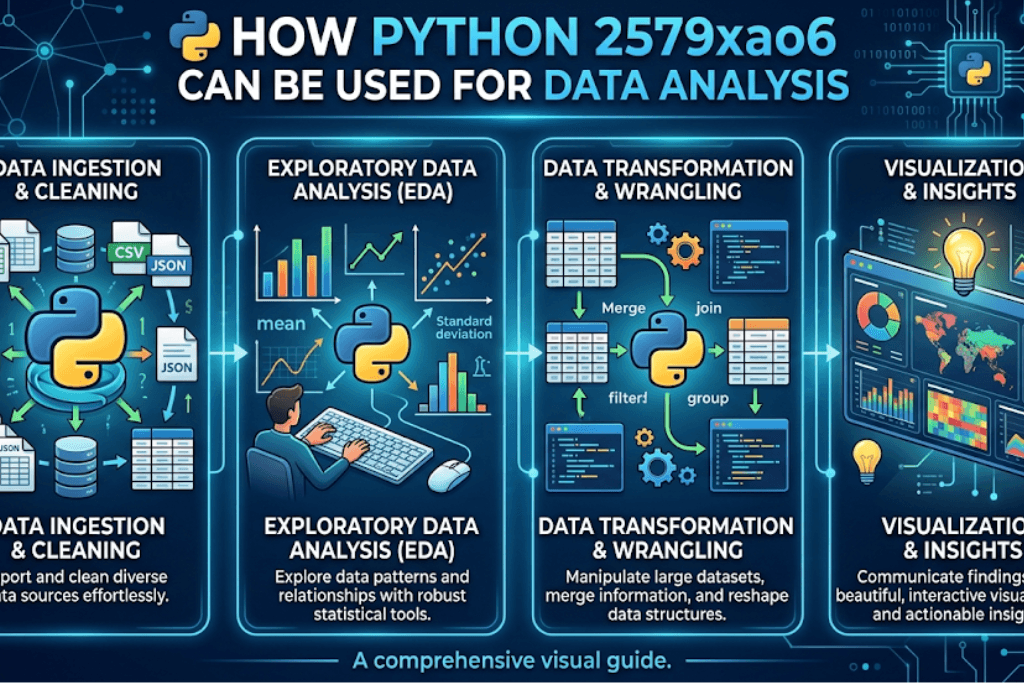

Chapter 3: Pandas – The Data Wrangling King of Python Libraries for Data Science

If NumPy provides the numerical engine, Pandas supplies the user-friendly interface. Among Python libraries for data science, Pandas is unmatched for data cleaning, transformation, and exploration. Its two primary data structures, Series (a 1D labeled array) and DataFrame (a 2D labeled table), make working with messy real-world data intuitive and efficient.

Installing Pandas

```bash
pip install pandas
```

Real-World Data Wrangling with Pandas

```python
import pandas as pd

# Loading data from various sources (CSV, Excel, SQL, JSON)
df = pd.read_csv('sales_data_2026.csv')

# Quick exploration
print(df.head())
df.info()  # info() prints its summary itself and returns None
print(df.describe())

# Handling missing data (common in real datasets)
df.dropna(subset=['customer_id'], inplace=True)
# Assign the result back: calling fillna(inplace=True) on a selected column is
# chained assignment, which recent pandas versions warn about or ignore
df['revenue'] = df['revenue'].fillna(df['revenue'].median())

# Filtering and transformation
high_value = df[df['revenue'] > 10000]
grouped = df.groupby('region')['revenue'].agg(['sum', 'mean', 'count'])

# Merging multiple datasets (like SQL JOINs)
customers = pd.read_csv('customers.csv')
merged = df.merge(customers, on='customer_id', how='left')

# Pivot tables for business intelligence
pivot = pd.pivot_table(df, values='revenue', index='region',
                       columns='product_category', aggfunc='sum')
```

Pandas 2.0+ Features (2026 Update)

Recent pandas releases have introduced major improvements. Pandas 2.0 added optional Apache Arrow-backed data types (through the pyarrow package). These can substantially reduce memory usage, especially for text columns, and speed up many operations. The exact gains depend on the data and the operation. When learning Python libraries for data science, prioritize Pandas, because it appears in nearly every data cleaning, exploration, and preparation task.
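Here is a minimal sketch of opting in to the Arrow backend when reading a CSV. It assumes pandas 2.0 or later. The pyarrow check only keeps the snippet runnable where that optional dependency is missing, and the tiny inline CSV is made up. To measure the difference on real data, compare df.memory_usage(deep=True) for both versions.

```python
import importlib.util
import io

import pandas as pd

# A tiny CSV with a text column, a numeric column, and one missing value
csv_text = "region,revenue\nNorth,1200.5\nSouth,980.0\nNorth,\n"

# Default: NumPy-backed dtypes (the missing revenue becomes float NaN)
df_numpy = pd.read_csv(io.StringIO(csv_text))

# Arrow-backed dtypes require pandas >= 2.0 plus the optional pyarrow package
if importlib.util.find_spec("pyarrow") is not None:
    df_arrow = pd.read_csv(io.StringIO(csv_text), dtype_backend="pyarrow")
else:
    df_arrow = None  # pyarrow not installed; only the default backend is available
```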

Chapter 4: Matplotlib and Seaborn – Visualization Python Libraries for Data Science

Raw numbers tell only part of the story. Visualization libraries transform complex results into actionable insights. Matplotlib provides the foundation, while Seaborn, which is built on top of Matplotlib, offers statistical visualizations with attractive defaults.

Matplotlib: The Workhorse of Visualization Libraries for Data Science

```python
import matplotlib.pyplot as plt

# Assumes df contains the columns used below
# (date, sales, age, income, spending, region, segment)

# Basic line plot
plt.figure(figsize=(10, 6))
plt.plot(df['date'], df['sales'], color='blue', linewidth=2)
plt.title('Monthly Sales Trend')
plt.xlabel('Date')
plt.ylabel('Sales ($)')
plt.grid(True)
plt.savefig('sales_trend.png', dpi=300)
plt.show()

# Subplots for multiple comparisons
fig, axes = plt.subplots(2, 2, figsize=(12, 10))
axes[0, 0].hist(df['age'], bins=30)
axes[0, 1].scatter(df['income'], df['spending'])
# Aggregate first so bar labels and heights come from the same (sorted) index;
# unique() returns regions in order of appearance, which would mismatch the sums
region_sales = df.groupby('region')['sales'].sum()
axes[1, 0].bar(region_sales.index, region_sales.values)
axes[1, 1].boxplot([df[df['segment'] == 'Premium']['spending'],
                    df[df['segment'] == 'Standard']['spending']])
```

Seaborn: Statistical Visualization Among Python Libraries for Data Science

```python
import seaborn as sns

# Built-in example datasets for practice (downloaded and cached on first use)
tips = sns.load_dataset('tips')

# Correlation heatmap: numeric_only=True skips text columns, which
# DataFrame.corr() rejects by default since pandas 2.0
correlation_matrix = df.corr(numeric_only=True)
sns.heatmap(correlation_matrix, annot=True, cmap='coolwarm',
            fmt='.2f', square=True)

# Advanced statistical plots: pass the full DataFrame so the hue column exists
sns.pairplot(df, vars=['revenue', 'units', 'price', 'customer_rating'],
             hue='region', diag_kind='kde')

# Time series with 95% confidence intervals
# (seaborn 0.12+ replaced the ci= argument with errorbar=)
sns.lineplot(data=df, x='date', y='sales', hue='product_line',
             errorbar=('ci', 95), estimator='mean')
```

Choosing Visualization Python Libraries for Data Science

For exploratory analysis, Seaborn reduces coding effort. For publication-quality figures or custom layouts, Matplotlib offers finer control. Both libraries are essential, and most practitioners use them together.
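The sketch below illustrates the finer control of Matplotlib's object-oriented interface. It builds a two-panel figure from synthetic data, adds a reference line and a legend, and renders the figure to an in-memory PNG. The data, the titles, and the choice of the non-interactive Agg backend are all illustrative assumptions.

```python
import io

import matplotlib
matplotlib.use("Agg")  # non-interactive backend: render to files or buffers only
import matplotlib.pyplot as plt
import numpy as np

# Synthetic monthly sales, purely for illustration
rng = np.random.default_rng(0)
months = np.arange(1, 25)
monthly_sales = rng.normal(loc=100, scale=15, size=months.size)

# Explicit Figure and Axes objects give per-panel control
fig, (ax_trend, ax_hist) = plt.subplots(1, 2, figsize=(10, 4), layout="constrained")

ax_trend.plot(months, monthly_sales, marker="o")
ax_trend.axhline(monthly_sales.mean(), linestyle="--", color="gray", label="Mean")
ax_trend.set(title="Monthly sales (synthetic)", xlabel="Month", ylabel="Sales")
ax_trend.legend()

ax_hist.hist(monthly_sales, bins=8, edgecolor="black")
ax_hist.set(title="Distribution", xlabel="Sales", ylabel="Count")

# Save to an in-memory buffer; pass a filename instead to write a file
buffer = io.BytesIO()
fig.savefig(buffer, format="png", dpi=150)
```

Seaborn's axes-level functions, such as sns.histplot, accept an ax= argument. A Seaborn plot can therefore be drawn into one of these Axes and then fine-tuned with the same Matplotlib calls.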

Chapter 5: Scikit-learn – Machine Learning Python Libraries for Data Science

When data scientists discuss predictive modeling, Scikit-learn dominates the conversation. It provides a consistent, well-documented API for dozens of classical machine learning algorithms.
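The consistency of that API is easiest to see side by side. This minimal sketch uses synthetic data from make_classification, with arbitrary sizes and random seeds. It trains and scores two very different algorithms with exactly the same fit and score calls.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split

# Synthetic binary classification data (no external files needed)
X, y = make_classification(n_samples=500, n_features=10, n_informative=5,
                           random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.25,
                                                    random_state=0)

models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)                 # same training call for every estimator
    scores[name] = model.score(X_test, y_test)  # mean accuracy for classifiers
```

Every estimator follows this fit/predict/score contract. Swapping models, or wrapping them in a Pipeline as in the next example, therefore needs no changes to the surrounding code.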

Installing Scikit-learn

```bash
pip install scikit-learn
```

End-to-End Machine Learning Pipeline

```python
from sklearn.model_selection import train_test_split, cross_val_score, GridSearchCV
from sklearn.preprocessing import StandardScaler, OneHotEncoder
from sklearn.compose import ColumnTransformer
from sklearn.pipeline import Pipeline
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, roc_auc_score

# Prepare data (assumes df has a binary 'churn' target column)
X = df.drop('churn', axis=1)
y = df['churn']

# Preprocessing for mixed data types; columns not listed here are dropped
# (remainder='drop' is the ColumnTransformer default)
numeric_features = ['age', 'income', 'usage_frequency']
categorical_features = ['region', 'plan_type']

preprocessor = ColumnTransformer([
    ('num', StandardScaler(), numeric_features),
    # handle_unknown='ignore' avoids errors when a category appears only in test data
    ('cat', OneHotEncoder(handle_unknown='ignore'), categorical_features)
])

# Create pipeline combining preprocessing and model
pipeline = Pipeline([
    ('preprocessor', preprocessor),
    ('classifier', RandomForestClassifier(n_estimators=100, random_state=42))
])

# Train-test split (stratify keeps the churn rate similar in both sets)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2,
                                                    random_state=42,
                                                    stratify=y)

# Cross-validation on the training set only
cv_scores = cross_val_score(pipeline, X_train, y_train, cv=5, scoring='roc_auc')
print(f"CV AUC: {cv_scores.mean():.3f} (+/- {cv_scores.std():.3f})")

# Hyperparameter tuning (parameter names are '<step name>__<parameter>')
param_grid = {
    'classifier__n_estimators': [50, 100, 200],
    'classifier__max_depth': [10, 20, None],
    'classifier__min_samples_split': [2, 5, 10]
}
grid_search = GridSearchCV(pipeline, param_grid, cv=5, scoring='roc_auc', n_jobs=-1)
grid_search.fit(X_train, y_train)

# Evaluate the best model on the held-out test set
best_model = grid_search.best_estimator_
y_pred = best_model.predict(X_test)
y_proba = best_model.predict_proba(X_test)[:, 1]
print(classification_report(y_test, y_pred))
print(f"Test AUC: {roc_auc_score(y_test, y_proba):.3f}")
```

Why Scikit-learn Remains Relevant Among Python Libraries for Data Science

While deep learning has gained popularity, Scikit-learn’s random forests, gradient boosting models, and logistic regression remain a first choice for tabular data. The separate XGBoost and LightGBM libraries also offer Scikit-learn-compatible interfaces. Many production systems rely on these models because they are fast to train, often easier to interpret, and typically need less data than neural networks.

Chapter 6: TensorFlow and PyTorch – Deep Learning Python Libraries for Data Science

For image recognition, natural language processing, and generative AI, TensorFlow and PyTorch lead the Python data science ecosystem. Both support GPU acceleration, automatic differentiation, and production deployment.
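Automatic differentiation is what lets both frameworks compute gradients for you. To show what that saves, this minimal NumPy sketch does the work by hand for logistic regression, a one-layer relative of the churn classifiers below, on synthetic data. It derives the gradient of binary cross-entropy manually, checks it against central finite differences, and applies plain full-batch gradient descent. This is not how TensorFlow or PyTorch are implemented. It only illustrates what calls like loss.backward() and optimizer.step() automate.

```python
import numpy as np

# Synthetic binary classification data (illustrative only)
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
true_w = np.array([1.5, -2.0, 0.5])
y = (X @ true_w + rng.normal(scale=0.5, size=200) > 0).astype(float)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def bce_loss(w):
    """Mean binary cross-entropy of a linear model with a sigmoid output."""
    p = np.clip(sigmoid(X @ w), 1e-12, 1 - 1e-12)  # avoid log(0)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))

def bce_grad(w):
    """Hand-derived gradient of bce_loss: X^T (sigmoid(Xw) - y) / n."""
    return X.T @ (sigmoid(X @ w) - y) / len(y)

# Check the hand-derived gradient against central finite differences
w = np.zeros(3)
h = 1e-6
numeric_grad = np.array([(bce_loss(w + h * e) - bce_loss(w - h * e)) / (2 * h)
                         for e in np.eye(3)])
analytic_grad = bce_grad(w)

# Plain gradient descent: the parameter update an optimizer's step() performs
initial_loss = bce_loss(w)
learning_rate = 0.5
for _ in range(200):
    w -= learning_rate * bce_grad(w)
final_loss = bce_loss(w)
```

Adam, the optimizer used in both framework examples below, follows the same loop but adapts the step size for each parameter.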

TensorFlow 2.x Example

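```python
import tensorflow as tf

# Neural networks need purely numeric input. This reuses the preprocessor from
# the Scikit-learn example and assumes y holds 0/1 labels.
X_train_nn = preprocessor.fit_transform(X_train)
X_test_nn = preprocessor.transform(X_test)
if hasattr(X_train_nn, "toarray"):  # convert a sparse result to a dense array
    X_train_nn, X_test_nn = X_train_nn.toarray(), X_test_nn.toarray()

# Build a neural network for binary classification
model = tf.keras.Sequential([
    tf.keras.Input(shape=(X_train_nn.shape[1],)),
    tf.keras.layers.Dense(128, activation='relu'),
    tf.keras.layers.Dropout(0.3),
    tf.keras.layers.Dense(64, activation='relu'),
    tf.keras.layers.Dropout(0.2),
    tf.keras.layers.Dense(1, activation='sigmoid')
])

model.compile(optimizer='adam',
              loss='binary_crossentropy',
              metrics=['accuracy', tf.keras.metrics.AUC()])

# Early stopping to prevent overfitting; keep the best weights seen
early_stop = tf.keras.callbacks.EarlyStopping(monitor='val_loss', patience=5,
                                              restore_best_weights=True)

# Train the model
history = model.fit(X_train_nn, y_train,
                    validation_split=0.2,
                    epochs=50,
                    batch_size=32,
                    callbacks=[early_stop],
                    verbose=1)

# Evaluate: returns [loss, accuracy, auc] in the order compiled above
test_loss, test_acc, test_auc = model.evaluate(X_test_nn, y_test)
print(f"Test AUC: {test_auc:.3f}")
```

PyTorch Example (Dynamic Computation Graphs)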

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader, TensorDataset

# Convert to tensors. This assumes purely numeric features, so the
# preprocessor from the Scikit-learn example is applied first, and 0/1 labels.
X_train_np = preprocessor.fit_transform(X_train)
if hasattr(X_train_np, "toarray"):  # convert a sparse result to a dense array
    X_train_np = X_train_np.toarray()
X_train_tensor = torch.tensor(X_train_np, dtype=torch.float32)
y_train_tensor = torch.tensor(y_train.values, dtype=torch.float32).reshape(-1, 1)

# Shuffled mini-batches of 32 samples
train_loader = DataLoader(TensorDataset(X_train_tensor, y_train_tensor),
                          batch_size=32, shuffle=True)

# Define model class
class ChurnClassifier(nn.Module):
    def __init__(self, input_dim):
        super().__init__()
        self.network = nn.Sequential(
            nn.Linear(input_dim, 128),
            nn.ReLU(),
            nn.Dropout(0.3),
            nn.Linear(128, 64),
            nn.ReLU(),
            nn.Dropout(0.2),
            nn.Linear(64, 1),
            nn.Sigmoid()
        )

    def forward(self, x):
        return self.network(x)

model = ChurnClassifier(X_train_tensor.shape[1])
criterion = nn.BCELoss()
optimizer = optim.Adam(model.parameters(), lr=0.001)

# Training loop (explicit control over every step)
for epoch in range(50):
    model.train()
    for batch_X, batch_y in train_loader:
        optimizer.zero_grad()
        outputs = model(batch_X)
        loss = criterion(outputs, batch_y)
        loss.backward()
        optimizer.step()
    if epoch % 10 == 0:
        print(f"Epoch {epoch}, Loss (last batch): {loss.item():.4f}")
```

Choosing Between Deep Learning Python Libraries for Data Science

TensorFlow excels in production deployment (TFX, TensorFlow Serving) and has stronger mobile support. PyTorch dominates research thanks to its Pythonic debugging and dynamic graphs. Both libraries are worth learning, but beginners may find TensorFlow’s high-level Keras API the simplest place to start.

Chapter 7: Specialized Python Libraries for Data Science by Domain

Beyond the core libraries covered so far, specialized libraries serve particular domains:

Natural Language Processing (NLP)

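```python
# Transformers (Hugging Face): pretrained state-of-the-art NLP models.
# Imported under another name so it does not shadow a Scikit-learn `pipeline` variable.
from transformers import pipeline as hf_pipeline

classifier = hf_pipeline("sentiment-analysis",
                         model="distilbert-base-uncased-finetuned-sst-2-english")
result = classifier("Python libraries for data science are revolutionizing analytics!")
print(result)  # a list containing one {'label': ..., 'score': ...} dict

# NLTK for traditional text processing
import nltk
from nltk.tokenize import word_tokenize

nltk.download('punkt_tab')  # tokenizer data; older NLTK releases use 'punkt' instead
tokens = word_tokenize("Learning Python libraries for data science is rewarding.")
print(tokens)
```

Time Series Analysis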

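```python
# Prophet (Meta) for forecasting
from prophet import Prophet

# Prophet expects a 'ds' (date) column and a 'y' (value) column
df_ts = df[['date', 'sales']].rename(columns={'date': 'ds', 'sales': 'y'})

prophet_model = Prophet(yearly_seasonality=True, weekly_seasonality=True)
prophet_model.fit(df_ts)
future = prophet_model.make_future_dataframe(periods=90)  # 90 extra days by default
forecast = prophet_model.predict(future)  # includes yhat, yhat_lower, yhat_upper

# Statsmodels for statistical time series
from statsmodels.tsa.arima.model import ARIMA
from statsmodels.tsa.stattools import adfuller

# Augmented Dickey-Fuller test: a small p-value suggests the series is stationary
adf_stat, p_value, *_ = adfuller(df_ts['y'])
# ARIMA(1, 1, 1) is an illustrative order, not a tuned choice
arima_result = ARIMA(df_ts['y'], order=(1, 1, 1)).fit()
arima_forecast = arima_result.forecast(steps=30)
```

Geospatial Analysis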

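```python
# GeoPandas for geographic data
import geopandas as gpd

# The bundled 'naturalearth_lowres' dataset requires geopandas < 1.0.
# GeoPandas 1.0 removed geopandas.datasets, so newer versions need a
# Natural Earth file downloaded separately and read with gpd.read_file().
world = gpd.read_file(gpd.datasets.get_path('naturalearth_lowres'))
# In a geographic (latitude/longitude) CRS, centroids are approximate and
# GeoPandas warns; re-project with to_crs() first for accurate results
world['centroid'] = world.geometry.centroid
world.plot(column='gdp_md_est', cmap='OrRd', legend=True)
```

Chapter 8: Performance Comparison of Python Libraries for Data Science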

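Benchmarking helps you choose the right tool. The relative figures below are rough indications; actual performance depends heavily on hardware, data size, and the specific operation: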

| Task | Best Python Library | Speed (relative) | Memory Use | Learning Curve |
|---|---|---|---|---|
| Array operations | NumPy | 1x (baseline) | Low | Low |
| Data wrangling (10M rows) | Pandas + Arrow | 0.3x | Medium | Medium |
| Data wrangling (100M+ rows) | Polars | 5x | Low | Medium |
| Classical ML (training) | Scikit-learn (with joblib) | 1x | Medium | Low |
| Deep Learning (CNN training) | PyTorch (GPU) | 50x | High | High |
| Visualization (static) | Matplotlib | Fast | Low | Low |
| Visualization (interactive) | Plotly | Moderate | Medium | Medium |
| Large-scale data (>RAM) | Dask | 0.8x | Distributed | High |
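Relative speeds like these are best measured on your own workload. This minimal sketch uses the standard-library timeit module to compare a pure-Python loop with the equivalent NumPy call. The sum-of-squares workload and the array size are arbitrary examples.

```python
import timeit

import numpy as np

values = np.random.default_rng(0).random(100_000)
values_list = values.tolist()

def python_sum_of_squares():
    return sum(v * v for v in values_list)

def numpy_sum_of_squares():
    return float(np.dot(values, values))

# Best of 5 repeats, each running the function 10 times
python_time = min(timeit.repeat(python_sum_of_squares, number=10, repeat=5))
numpy_time = min(timeit.repeat(numpy_sum_of_squares, number=10, repeat=5))
print(f"Python loop: {python_time:.4f}s, NumPy: {numpy_time:.4f}s, "
      f"speedup: {python_time / numpy_time:.1f}x")
```

Taking the minimum of several repeats reduces noise from other processes. The printed speedup will vary from machine to machine.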

Emerging Alternatives

Newer libraries such as Polars (a DataFrame library written in Rust) and CuPy (a GPU-accelerated, NumPy-compatible array library) are gaining traction. However, the established libraries remain dominant due to ecosystem maturity.

Chapter 9: Installation and Environment Management for Data Science Libraries

Managing Python data science libraries requires proper environment isolation:

Using Conda (Recommended for Data Science)

```bash
# Create an environment with the core data science libraries
conda create -n ds_env python=3.12 numpy pandas matplotlib seaborn scikit-learn jupyter
conda activate ds_env

# Install deep learning frameworks with pip inside the active environment
# (PyTorch no longer publishes packages to its own conda channel)
pip install tensorflow torch torchvision
```

Using pip with Virtual Environments

```bash
# Standard Python approach
python -m venv ds_venv
source ds_venv/bin/activate  # Linux/macOS
# ds_venv\Scripts\activate   # Windows

# Install the essential data science libraries
pip install numpy pandas matplotlib seaborn scikit-learn jupyter
pip install tensorflow  # or: pip install torch
```

requirements.txt for Reproducibility

```text
numpy==1.26.3
pandas==2.2.1
matplotlib==3.8.3
seaborn==0.13.2
scikit-learn==1.4.1
tensorflow==2.16.1  # 2.16 is the first TensorFlow release that supports Python 3.12
```

environment.yml for Conda Users

```yaml
name: ds_project_2026
dependencies:
  - python=3.12
  - numpy=1.26
  - pandas=2.2
  - matplotlib=3.8
  - seaborn=0.13
  - scikit-learn=1.4
  - pip
  - pip:
      - tensorflow==2.16.1  # TensorFlow 2.15 does not support Python 3.12
```

Chapter 10: Real-World Project Using Multiple Python Libraries for Data Science

A complete data science project integrates several of these libraries. Here is an example predicting customer churn for a telecom company:

```python
# Step 1: Data loading with Pandas
import pandas as pd

df = pd.read_csv('telecom_churn_2026.csv')

# Step 2: Data cleaning with Pandas + NumPy
import numpy as np

df['total_charges'] = pd.to_numeric(df['total_charges'], errors='coerce')
df.fillna({'total_charges': df['total_charges'].median()}, inplace=True)
# Numeric target used below (assumes 'churn' holds 'Yes'/'No' labels)
df['churn_numeric'] = np.where(df['churn'] == 'Yes', 1, 0)

# Step 3: Visualization with Matplotlib + Seaborn
import matplotlib.pyplot as plt
import seaborn as sns

fig, axes = plt.subplots(2, 2, figsize=(14, 10))
sns.countplot(data=df, x='churn', ax=axes[0, 0])
axes[0, 0].set_title('Churn Distribution')
sns.boxplot(data=df, x='churn', y='monthly_charges', ax=axes[0, 1])
axes[0, 1].set_title('Monthly Charges by Churn')
numeric_cols = ['tenure', 'monthly_charges', 'total_charges']
corr = df[numeric_cols + ['churn_numeric']].corr()
sns.heatmap(corr, annot=True, cmap='coolwarm', ax=axes[1, 0])
sns.histplot(data=df, x='tenure', hue='churn', kde=True, ax=axes[1, 1])
plt.tight_layout()
plt.savefig('eda_churn.png', dpi=300)

# Step 4: Preprocessing with Scikit-learn
from sklearn.preprocessing import OrdinalEncoder, StandardScaler
from sklearn.compose import ColumnTransformer
from sklearn.pipeline import Pipeline

categorical_cols = ['gender', 'partner', 'dependents', 'phone_service', 'internet_service']

# Encode categories and scale numeric columns inside the pipeline so both are
# fitted on the training split only. Every column must be listed: by default
# ColumnTransformer drops unlisted columns (remainder='drop').
preprocessor = ColumnTransformer([
    ('encoder', OrdinalEncoder(handle_unknown='use_encoded_value', unknown_value=-1),
     categorical_cols),
    ('scaler', StandardScaler(), numeric_cols)
])

# Step 5: Model training with Scikit-learn
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X = df[categorical_cols + numeric_cols]
y = df['churn_numeric']
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2,
                                                    random_state=42, stratify=y)

pipeline = Pipeline([
    ('preprocessor', preprocessor),
    ('classifier', RandomForestClassifier(n_estimators=100, random_state=42))
])
pipeline.fit(X_train, y_train)

# Step 6: Evaluation
from sklearn.metrics import classification_report, roc_auc_score

y_pred = pipeline.predict(X_test)
y_proba = pipeline.predict_proba(X_test)[:, 1]
print(classification_report(y_test, y_pred))
print(f"ROC-AUC: {roc_auc_score(y_test, y_proba):.3f}")

# Step 7: Feature importance
importances = pipeline.named_steps['classifier'].feature_importances_
# Take names from the fitted preprocessor so they match its output column order
# (e.g. 'encoder__gender', 'scaler__tenure')
feature_names = pipeline.named_steps['preprocessor'].get_feature_names_out()
feature_importance_df = pd.DataFrame({'feature': feature_names, 'importance': importances})
feature_importance_df.sort_values('importance', ascending=False, inplace=True)

plt.figure(figsize=(10, 6))
sns.barplot(data=feature_importance_df, x='importance', y='feature')
plt.title('Feature Importance - Churn Model')
plt.tight_layout()
plt.savefig('feature_importance.png', dpi=300)
```

Chapter 11: Learning Path for Python Data Science Libraries

Mastering Python libraries for data science is easier with a structured plan:

Month 1-2: Foundations

- NumPy (arrays, broadcasting, linear algebra)

- Pandas (DataFrames, groupby, merge, pivot tables)

- Matplotlib (line plots, histograms, customization)

Month 3-4: Intermediate

- Seaborn (statistical visualizations, pairplots, heatmaps)

- Scikit-learn (preprocessing, models, pipelines, cross-validation)

- Exploratory Data Analysis (EDA) projects

Month 5-6: Advanced

- TensorFlow or PyTorch basics

- Feature engineering with Pandas + Scikit-learn

- Deployment with Flask + Pickle

Month 7-12: Specialization

- NLP: Transformers, NLTK, spaCy

- Time series: Prophet, Statsmodels

- Big data: Dask, Spark (PySpark)

- MLOps: MLflow, Docker, FastAPI

Recommended Resources for Python Data Science Libraries

| Resource Type | Best Options |
|---|---|
| Books | “Python for Data Analysis” (Wes McKinney), “Hands-On ML with Scikit-Learn” (Aurélien Géron) |
| Courses | Coursera (IBM Data Science), DataCamp, Fast.ai |
| Practice | Kaggle competitions, DrivenData, StrataScratch |
| Documentation | Official docs for each library (excellent quality) |

Chapter 12: Future Trends in Python Libraries for Data Science

Looking ahead to 2026-2028, several trends are likely to shape Python libraries for data science:

1. GPU Acceleration Everywhere

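Libraries such as CuPy (GPU NumPy) and cuDF (GPU DataFrames), part of NVIDIA's RAPIDS suite, are making GPU acceleration increasingly common in data science. cuDF already offers a pandas accelerator mode (cudf.pandas) that runs existing pandas code on NVIDIA GPUs and falls back to the CPU for unsupported operations.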

2. Integration with Large Language Models

Libraries such as LangChain, LlamaIndex, and Hugging Face Transformers are bridging classical data science with generative AI. Future data science libraries are likely to include built-in LLM capabilities for tasks such as automated feature engineering and report generation.

3. WebAssembly (Wasm) Support

Pyodide, a build of Python for WebAssembly, runs Python and many data science libraries, including NumPy and Pandas, directly in web browsers without a server. This could democratize data science education and enable client-side analytics.

4. Automated Machine Learning (AutoML)

AutoML libraries such as AutoGluon, PyCaret, and H2O reduce the need for manual model selection and hyperparameter tuning. Expect them to become increasingly common in data scientists’ toolkits.

5. Improved Interoperability

The Apache Arrow ecosystem enables zero-copy data sharing between Python libraries, R, Julia, and SQL engines, which means faster, more memory-efficient workflows.

Conclusion: Mastering Python Libraries for Data Science Is a Career-Defining Skill

Throughout this guide, we have explored the essential Python libraries for data science that every analyst and scientist should know. These range from NumPy’s fast array operations and Pandas’ data wrangling to Matplotlib’s visualization flexibility and Scikit-learn’s consistent ML API. They also include TensorFlow’s production-ready deep learning and PyTorch’s research-friendly dynamic graphs. Together, these libraries form a complete toolkit for extracting value from data.

Mastering these libraries requires consistent practice, real-world projects, and keeping up with new releases. The investment pays off: data scientists proficient in them are well compensated in the US and command premium rates globally. Moreover, as artificial intelligence continues to transform industries, these skills are likely to remain valuable for many years.

Start today. Install NumPy, load your first dataset with Pandas, create a visualization with Matplotlib, train a model with Scikit-learn, and then push further into deep learning with TensorFlow. These libraries are free, well documented, and waiting for you. Your future self will thank you for mastering them.

Final Checklist for Mastering Python Libraries for Data Science

- [ ] Can you create NumPy arrays and perform vectorized operations?

- [ ] Can you load, clean, and merge datasets with Pandas?

- [ ] Can you create publication-quality visualizations with Matplotlib/Seaborn?

- [ ] Can you build, evaluate, and tune Scikit-learn models?

- [ ] Can you train a neural network with TensorFlow or PyTorch?

- [ ] Have you completed 3+ end-to-end data science projects?

- [ ] Do you use virtual environments to manage your data science libraries?

- [ ] Have you contributed to an open-source data science library?

If you answered “yes” to all, you are ready to work as a professional data scientist. If not, use this guide as your roadmap. The world runs on data, and Python libraries for data science are how you turn that data into decisions.