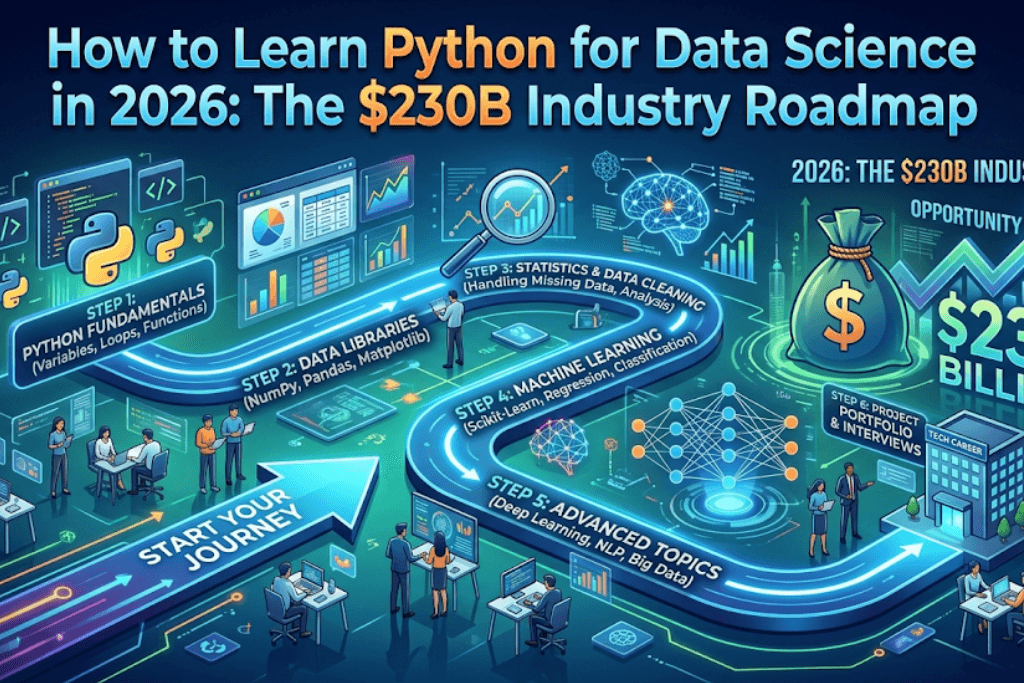

Introduction: Why You Must Learn Python for Data Science in 2026

The global data science market is projected to surpass $230 billion by 2026, growing at a compound annual rate of nearly 30%. At the heart of this growth sits one programming language: Python. If you want to learn Python for data science, now is a strategic moment to start. Companies from JPMorgan to Netflix are racing to hire professionals who can manipulate data, build predictive models, and deploy AI solutions. But why Python? Because it bridges the gap between statistical analysis and production-ready machine learning. This 3000-word roadmap shows you how to learn Python for data science systematically, avoid common pitfalls, and position yourself inside a $230B industry. Whether you are a beginner or a professional looking to pivot, by the end of this guide you will have a clear, actionable plan to master Python for data science by 2026.

Chapter 1: The $230B Data Science Industry – Why Python Dominates

Before you write a single line of code, understand the economic forces at play. The $230 billion valuation for data science by 2026 isn't hype; it reflects every sector digitizing. Healthcare, finance, retail, logistics, and energy all generate petabytes of data daily. To extract value, they need analysts and scientists who know Python, because Python offers mature libraries (pandas, scikit-learn, TensorFlow) and a massive community. According to IBM, demand for data scientists will grow by 28% by 2026, and 85% of job postings explicitly request Python. Compared with R or Julia, Python has broader first-class support across cloud platforms (AWS, Azure, GCP) and big data tools (Spark, Dask). So when you learn Python for data science, you are not just learning a language; you are adopting the lingua franca of the data economy. Employers pay six-figure salaries for practitioners who can move from a Jupyter notebook to a production API. The roadmap ahead is designed to help you become one of them.

Chapter 2: Setting Up Your Environment to Learn Python for Data Science

To learn Python for data science efficiently, you need the right toolkit. Skip full-featured IDEs like PyCharm for now. Start with Anaconda Distribution, which bundles Python, conda, and 250+ scientific packages. Install Jupyter Notebook or JupyterLab; they are non-negotiable for exploratory analysis. Alternatively, VS Code with the Python and Jupyter extensions works well. The key is to separate environments: create a dedicated conda environment for data science (conda create -n ds-env python=3.12), activate it (conda activate ds-env), then install the core libraries: numpy, pandas, matplotlib, seaborn, scikit-learn, and jupyter. Why does this matter? Version conflicts between packages can derail weeks of progress, and isolated environments keep each project's dependencies separate. Also set up Git and GitHub from day one. Version control is not optional in a $230B industry, where collaboration is everything. Finally, practice using the terminal: pip install, conda list, python -m venv. These small habits separate hobbyists from professionals. Once your environment is stable, you are ready to dive into data manipulation.
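
After creating and activating the environment, a quick sanity check helps confirm the core libraries are importable. The sketch below uses only the standard library (importlib.util.find_spec), so it runs even when packages are missing. The mapping from package names to import names (scikit-learn imports as sklearn; jupyter_core stands in for the jupyter metapackage) is an assumption you may need to adjust.

```python
import importlib.util
import sys

# Package name -> import name. The two can differ (scikit-learn is imported as sklearn).
# Using jupyter_core for the jupyter metapackage is an assumption.
CORE_PACKAGES = {
    "numpy": "numpy",
    "pandas": "pandas",
    "matplotlib": "matplotlib",
    "seaborn": "seaborn",
    "scikit-learn": "sklearn",
    "jupyter": "jupyter_core",
}


def check_environment(packages: dict[str, str]) -> dict[str, bool]:
    """Return {package name: True if its import name can be found in this interpreter}."""
    return {name: importlib.util.find_spec(module) is not None for name, module in packages.items()}


if __name__ == "__main__":
    # Printing the interpreter path shows whether the intended environment is active.
    print(f"Python {sys.version.split()[0]} at {sys.executable}")
    for name, found in check_environment(CORE_PACKAGES).items():
        print(f"{name:<14} {'OK' if found else 'MISSING'}")
```

Run it from the activated environment. Any MISSING line tells you what still needs installing, and the interpreter path confirms you are not accidentally using a system-wide Python.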

Chapter 3: Python Basics – The Foundation You Must Master

Many beginners rush to machine learning before understanding Python fundamentals. That is a mistake. To learn Python for data science correctly, spend 2–4 weeks on core syntax: variables, data types (int, float, str, bool), lists, tuples, dictionaries, sets, loops (for, while), conditionals (if-elif-else), functions, lambda expressions, list comprehensions, and error handling (try-except). Why are these essential? Data cleaning requires looping through records, feature engineering uses dictionary mappings, and model evaluation relies on functions. For example, you will regularly use try-except to handle malformed values, such as a price recorded as "n/a", without crashing your pipeline. Practice on platforms like HackerRank or LeetCode (easy difficulty), but do not over-index on algorithms: data science is not software engineering. Focus on string manipulation, date parsing, and nested data structures. A great exercise: given a list of sales dictionaries, compute total revenue per region using a function and a list comprehension. Master these basics, and pandas will feel intuitive; skip them, and you will struggle with indexing and apply functions. Remember: every $230B insight starts with clean, reliable code.
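
One way to solve the revenue-per-region exercise is sketched below. The sales records are hypothetical, and the amounts are deliberately stored as text (including one malformed "n/a") so the try-except step has something to catch.

```python
from typing import Optional

# Hypothetical sales records; amounts arrive as text, as they often do from CSV exports.
sales = [
    {"region": "North", "amount": "120.50"},
    {"region": "South", "amount": "80"},
    {"region": "North", "amount": "n/a"},
    {"region": "West", "amount": "200.25"},
    {"region": "South", "amount": "19.50"},
]


def parse_amount(raw: str) -> Optional[float]:
    """Convert a text amount to float; return None instead of crashing on bad input."""
    try:
        return float(raw)
    except (TypeError, ValueError):
        return None


def revenue_by_region(records: list[dict]) -> dict[str, float]:
    """Sum parsed amounts per region, skipping rows whose amount could not be parsed."""
    regions = {r["region"] for r in records}  # set comprehension: unique region names
    totals = {}
    for region in sorted(regions):
        # List comprehension: parsed amounts for this region (may contain None).
        amounts = [parse_amount(r["amount"]) for r in records if r["region"] == region]
        totals[region] = sum(a for a in amounts if a is not None)
    return totals


print(revenue_by_region(sales))  # {'North': 120.5, 'South': 99.5, 'West': 200.25}
```

Returning None from the parser, rather than silently substituting 0, keeps the decision about bad values visible in one place.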

Chapter 4: NumPy – The Engine of Numerical Computing

Once comfortable with Python, your next step is NumPy (Numerical Python). NumPy provides N-dimensional arrays and vectorized operations. Why not use Python lists? Because vectorized NumPy operations run in compiled code over contiguous memory, which often makes them tens of times faster than equivalent Python loops, a difference that matters on large datasets. You will use NumPy for linear algebra (dot products, matrix inverses), random number generation (for simulations), broadcasting (operating on arrays of different shapes), and statistical functions (mean, median, std). Spend one week mastering array creation (np.array(), np.zeros(), np.arange()), indexing and slicing, reshaping (reshape(), flatten()), concatenation (np.vstack(), np.hstack()), universal functions (np.exp(), np.log(), np.sqrt()), and aggregations and cumulative operations (np.sum(), np.cumsum()). A real-world example: image processing represents pictures as 3D NumPy arrays (height, width, channels). For practice, take a CSV of stock prices, load it into a NumPy array, compute daily returns with vectorized operations, then calculate the Sharpe ratio. This exercise builds muscle memory. NumPy is also the foundation for pandas and scikit-learn, so do not skip it.
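
Here is a minimal sketch of the stock-price exercise, using hypothetical prices instead of a real CSV. It assumes simple (not log) returns, a zero risk-free rate, and 252 trading days per year for annualization; these are common conventions, not requirements.

```python
import numpy as np

# Hypothetical closing prices: rows are trading days, columns are two tickers.
# With a real file you might use np.genfromtxt("prices.csv", delimiter=",", skip_header=1);
# that file name and layout are assumptions.
prices = np.array([
    [100.0, 50.0],
    [101.0, 49.5],
    [102.5, 50.5],
    [101.5, 51.0],
    [103.0, 51.5],
    [104.0, 51.0],
])

# Vectorized simple daily returns, (P_t - P_{t-1}) / P_{t-1}, for every column at once.
daily_returns = prices[1:] / prices[:-1] - 1


def annualized_sharpe(returns: np.ndarray, risk_free_daily: float = 0.0, periods: int = 252) -> np.ndarray:
    """Per-column Sharpe ratio: mean excess return / sample std, scaled by sqrt(periods)."""
    excess = returns - risk_free_daily  # broadcasting a scalar across the whole array
    return excess.mean(axis=0) / excess.std(axis=0, ddof=1) * np.sqrt(periods)


print(daily_returns.shape)  # (5, 2)
print(annualized_sharpe(daily_returns))
```

Slicing prices[1:] and prices[:-1] lines up each day with the previous one without any Python loop, which is exactly the vectorized style the exercise is meant to build.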

Chapter 5: Pandas – Data Wrangling for the $230B Industry

If NumPy is the engine, pandas is the steering wheel. To learn Python for data science professionally, pandas is non-negotiable. A commonly cited estimate is that over 70% of a data scientist's time goes to cleaning and transforming data, and pandas makes that work bearable. Pandas introduces two core structures: Series (a 1D labeled array) and DataFrame (a 2D table with labeled rows and columns). Focus on these operations: reading/writing data (pd.read_csv(), df.to_csv()), inspecting (df.head(), df.info(), df.describe()), selecting columns (df['col']), filtering rows (df[df['price'] > 100]), handling missing values (df.dropna(), df.fillna()), grouping (df.groupby().agg()), merging (pd.merge(), pd.concat()), pivoting (df.pivot_table()), and applying functions (df.apply()). A practical project: take a messy dataset of 1 million customer transactions (available on Kaggle). Use pandas to remove duplicates, impute missing ages, group by customer ID to compute lifetime value, and merge with a product catalog. This single project will teach you more than ten tutorials. Also learn datetime handling: parsing dates, resampling time series, and shifting lags. Pandas is your daily bread; practice it until groupby and merge become reflexes.
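
A scaled-down sketch of the transactions project follows, with two tiny hypothetical DataFrames standing in for the Kaggle data. The column names are assumptions, and median imputation is only one simple strategy for missing ages.

```python
import pandas as pd

# Tiny hypothetical stand-ins for the transactions and product-catalog tables.
transactions = pd.DataFrame({
    "customer_id": [1, 1, 2, 2, 3, 3],
    "product_id": ["A", "A", "B", "A", "C", "B"],
    "age": [34, 34, None, 41, 29, 29],
    "amount": [20.0, 20.0, 15.0, 20.0, 40.0, 15.0],
    "date": ["2024-01-05", "2024-01-05", "2024-02-10", "2024-03-01", "2024-01-20", "2024-02-02"],
})
catalog = pd.DataFrame({"product_id": ["A", "B", "C"], "category": ["Books", "Games", "Music"]})

# 1) Remove exact duplicate rows (the first two rows are identical).
clean = transactions.drop_duplicates().copy()

# 2) Parse date strings and impute missing ages with the median age.
clean["date"] = pd.to_datetime(clean["date"])
clean["age"] = clean["age"].fillna(clean["age"].median())

# 3) Lifetime value per customer via named aggregation.
ltv = clean.groupby("customer_id").agg(
    lifetime_value=("amount", "sum"),
    orders=("amount", "count"),
    first_purchase=("date", "min"),
)

# 4) Enrich each transaction with its product category; how="left" keeps every transaction.
enriched = clean.merge(catalog, on="product_id", how="left")

print(ltv)
print(enriched.head())
```

Calling .copy() after drop_duplicates() makes the later column assignments operate on an independent DataFrame rather than a possible view of the original.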

Chapter 6: Data Visualization – Telling Stories with Matplotlib & Seaborn

Insights are useless if you cannot communicate them. To learn Python for data science completely, you must master data visualization. Two libraries dominate: Matplotlib (flexible, low-level) and Seaborn (high-level, statistical, polished defaults). Matplotlib gives you fine-grained control: plt.plot(), plt.scatter(), plt.hist(), plt.subplots(). Start with Matplotlib to understand figures, axes, and colormaps. Then switch to Seaborn for rapid exploration: sns.histplot(), sns.boxplot(), sns.pairplot(), sns.heatmap(). Why visualize? A $230B industry runs on executive dashboards and investor decks, and you need to spot outliers, distributions, and correlations visually. For example, use a scatter matrix or correlation heatmap to spot likely multicollinearity before building a regression model, or a boxplot to compare salary distributions across departments. As you progress, also learn interactive libraries: Plotly for dashboards, Altair for declarative statistical charts, and Bokeh for interactive and streaming web plots. A capstone project: take the Titanic dataset, create a pairplot colored by survival, add a heatmap of missing values, and build a histogram of age distributions. Share your plots on GitHub. Remember: a clear visualization can be the difference between a rejected proposal and a funded AI initiative.
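
A minimal Matplotlib sketch of the salary-comparison idea is shown below, with a histogram beside the boxplot. The salary data is randomly generated and hypothetical, and the non-interactive Agg backend is an assumption so the script runs without a display.

```python
import io

import matplotlib

matplotlib.use("Agg")  # non-interactive backend so the script also runs on servers and CI
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(seed=0)
# Hypothetical salary samples (in $k) for three departments.
salaries = {
    "Sales": rng.normal(70, 10, 200),
    "Engineering": rng.normal(110, 15, 200),
    "Support": rng.normal(55, 8, 200),
}

# One figure with two axes; the explicit fig/ax interface gives per-panel control.
fig, (ax_hist, ax_box) = plt.subplots(1, 2, figsize=(10, 4))

for dept, values in salaries.items():
    ax_hist.hist(values, bins=20, alpha=0.5, label=dept)  # overlaid, semi-transparent
ax_hist.set(title="Salary distribution", xlabel="Salary ($k)", ylabel="Count")
ax_hist.legend()

ax_box.boxplot(list(salaries.values()))  # boxes are drawn at x = 1, 2, 3
ax_box.set_xticks(range(1, len(salaries) + 1))
ax_box.set_xticklabels(list(salaries.keys()))
ax_box.set(title="Salary by department", ylabel="Salary ($k)")

fig.tight_layout()
buffer = io.BytesIO()
fig.savefig(buffer, format="png")  # use fig.savefig("salaries.png") to write a file instead
plt.close(fig)
```

With Seaborn and a long-format DataFrame, sns.boxplot(data=df, x="department", y="salary") draws a comparable boxplot in one call; those column names are again hypothetical.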

Chapter 7: Exploratory Data Analysis (EDA) – The Scientific Method

EDA is the heart of any data project. When you learn Python for data science, EDA is where you ask questions, test assumptions, and form hypotheses. The process: 1) load data, 2) clean and impute, 3) univariate analysis (histograms and boxplots for single variables), 4) bivariate analysis (scatter plots, correlation matrices), 5) multivariate analysis (facet grids, PCA projections). Use pandas for summary statistics and Seaborn for visual checks. Adopt a checklist: check for missing values, outliers, imbalanced classes, duplicate rows, incorrect data types (e.g., dates stored as strings), and logical consistency (e.g., age cannot be negative). For outliers, use the IQR rule or Z-scores. For missing values, decide between deletion, mean/median imputation, or more advanced methods like KNN imputation. Document every decision in a Jupyter notebook with markdown cells. A strong EDA reveals patterns: perhaps sales spike on weekends, or certain zip codes show elevated fraud rates. When you practice EDA rigorously, you will find that much of a model's performance comes from EDA insights and the features they inspire, not from complex algorithms. Practice on the Housing Prices dataset from Kaggle and use EDA to identify which features correlate most with price. This skill alone will make you invaluable in a $230B industry.
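
Several checklist items can be automated. The eda_report helper below is a hypothetical sketch, not a pandas function: it reports missing values, duplicate rows, column dtypes, IQR outliers (values outside Q1 - 1.5*IQR to Q3 + 1.5*IQR), and negative ages, assuming an "age" column exists.

```python
import numpy as np
import pandas as pd


def eda_report(df: pd.DataFrame) -> dict:
    """Run a few EDA checklist items and return the findings as a dict (hypothetical helper)."""
    report = {
        "missing_per_column": df.isna().sum().to_dict(),
        "duplicate_rows": int(df.duplicated().sum()),
        "dtypes": df.dtypes.astype(str).to_dict(),
        "iqr_outliers": {},
    }
    # IQR rule: flag values below Q1 - 1.5*IQR or above Q3 + 1.5*IQR in each numeric column.
    for col in df.select_dtypes(include="number").columns:
        q1, q3 = df[col].quantile([0.25, 0.75])
        iqr = q3 - q1
        mask = (df[col] < q1 - 1.5 * iqr) | (df[col] > q3 + 1.5 * iqr)
        report["iqr_outliers"][col] = df.index[mask.to_numpy()].tolist()
    # Logical-consistency check: ages should never be negative (assumes an "age" column).
    if "age" in df.columns:
        report["negative_age_rows"] = df.index[(df["age"] < 0).to_numpy()].tolist()
    return report


# Hypothetical sample containing a missing age, a duplicate row, a price outlier, and a bad age.
sample = pd.DataFrame({
    "age": [35, 42, -3, 51, 42, np.nan],
    "price": [250_000, 310_000, 295_000, 2_900_000, 310_000, 280_000],
    "sold_on": ["2024-01-02"] * 6,  # dates stored as strings, not datetime64
})

report = eda_report(sample)
print(report)
```

The function only reports; deciding whether to drop, cap, or impute each flagged value remains a judgment call worth documenting in the notebook.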

Chapter 8: Scikit-Learn – Machine Learning Made Accessible

Now you enter the most exciting phase: machine learning. Scikit-learn is the gold standard for classical ML in Python. It provides a consistent API: fit(), predict(), transform(), fit_transform(). Core algorithms you must know: linear regression (for forecasting numeric targets), logistic regression (binary classification), decision trees and random forests (tree-based models; single trees are easy to interpret), k-nearest neighbors (a simple baseline), k-means clustering (unsupervised), and PCA (dimensionality reduction). Follow this workflow: 1) split data into train and test sets (train_test_split), 2) scale features (StandardScaler or MinMaxScaler; important for distance- and gradient-based models, unnecessary for trees), 3) train the model, 4) predict, 5) evaluate (accuracy, precision, recall, and F1 for classification; RMSE and R-squared for regression). Use cross-validation (cross_val_score) to get a more reliable performance estimate and to detect overfitting. Hyperparameter tuning with GridSearchCV or RandomizedSearchCV can further improve performance. A realistic project: predict customer churn for a telecom company. Clean the data, one-hot encode categorical variables, train a random forest, tune the maximum depth and number of estimators, then interpret feature importance. Also learn pipelines (Pipeline) to chain preprocessing and modeling, so preprocessing is fitted only on training data during cross-validation. Scikit-learn's documentation is exemplary; use it daily. Remember: you do not need deep learning for most business problems. Classical ML covers the large majority of use cases in the $230B industry.

Chapter 9: Advanced Topics – SQL, Big Data, and Cloud for Python

To truly learn Python for data science at a senior level, extend beyond local datasets. First, SQL is mandatory. Most data lives in relational databases and warehouses (PostgreSQL, MySQL, BigQuery). Use SQLAlchemy and pandas.read_sql() to pull data directly into a DataFrame. Practice window functions (ROW_NUMBER, RANK) and joins. Second, big data: when datasets exceed memory, use Dask (parallel, pandas-like DataFrames) or PySpark (distributed computing), and learn to partition data and use lazy evaluation. Third, the cloud: AWS (S3, SageMaker), Google Cloud (BigQuery, Vertex AI), or Azure (Azure Machine Learning studio). Store data in cloud storage, spin up virtual machines, and schedule automated pipelines with Apache Airflow (Python-based). Fourth, version your data with DVC (Data Version Control). A senior data scientist in the $230B industry does not just build models; they deploy them. Learn to wrap a scikit-learn model in a Flask or FastAPI REST API, containerize it with Docker, and serve it via Kubernetes or a serverless function (AWS Lambda). These skills can substantially raise your earning potential. When you combine Python for data science with cloud and MLOps skills, you become a full-stack data scientist.
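
To practice joins and window functions without a database server, Python's built-in sqlite3 module can serve as a local stand-in. This sketch assumes a SQLite build with window-function support (version 3.25 or newer, which recent Python releases typically bundle), and the tables are hypothetical. With PostgreSQL, MySQL, or BigQuery you would typically create a SQLAlchemy engine and pass it to pandas.read_sql() instead of a sqlite3 connection.

```python
import sqlite3

import pandas as pd

# In-memory SQLite database standing in for a production relational database.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, region TEXT);
CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
INSERT INTO customers VALUES (1, 'North'), (2, 'South'), (3, 'North');
INSERT INTO orders VALUES (10, 1, 50.0), (11, 1, 75.0), (12, 2, 20.0), (13, 3, 90.0), (14, 3, 10.0);
""")

# A join plus a window function: rank each customer's orders by amount, largest first.
query = """
SELECT c.region,
       o.customer_id,
       o.order_id,
       o.amount,
       ROW_NUMBER() OVER (PARTITION BY o.customer_id ORDER BY o.amount DESC) AS order_rank
FROM orders AS o
JOIN customers AS c ON c.customer_id = o.customer_id
ORDER BY o.customer_id, order_rank
"""
ranked = pd.read_sql(query, conn)
conn.close()

# Keep each customer's largest order, then total those per region in pandas.
top_orders = ranked[ranked["order_rank"] == 1]
print(top_orders.groupby("region")["amount"].sum())
```

Pushing the ranking into SQL means only the rows you need leave the database, which becomes important once the tables no longer fit in memory.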

Chapter 10: Deep Learning & AI – TensorFlow and PyTorch

Deep learning is not required for every job, but familiarity is expected. To learn Python for data science in 2026, understand TensorFlow and PyTorch basics. TensorFlow 2.x uses the Keras API (Sequential, Dense, Conv2D, LSTM). PyTorch is widely preferred in research, largely because of its dynamic computation graphs. Focus on neural network architecture (input, hidden, and output layers), activation functions (ReLU, sigmoid, softmax), loss functions (categorical cross-entropy, MSE), optimizers (Adam, SGD), and callbacks (early stopping, model checkpointing). A practical project: image classification on CIFAR-10 using a convolutional neural network (CNN), or sequence prediction for stock prices using an LSTM. For deep learning work, use Google Colab (free GPU) or Kaggle notebooks. Also learn transfer learning: use pretrained models (ResNet, BERT) to solve tasks with limited data. But be warned: deep learning is data-hungry and compute-intensive. The $230B industry uses it for computer vision, NLP, and recommendation systems. Still, start with classical ML. Once comfortable, spend 2–3 months on the Deep Learning Specialization by Andrew Ng (taught in Python). That will give you credibility.
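
Before reaching for a framework, it can help to see what a dense network computes. The NumPy sketch below runs one forward pass through a single hidden layer with ReLU, a softmax output, and categorical cross-entropy loss. The layer sizes and random weights are arbitrary assumptions, and training (backpropagation plus an optimizer such as Adam or SGD) is deliberately left out, since that is the part TensorFlow and PyTorch automate.

```python
import numpy as np


def relu(z: np.ndarray) -> np.ndarray:
    """ReLU activation: max(0, z), elementwise."""
    return np.maximum(0.0, z)


def softmax(z: np.ndarray) -> np.ndarray:
    """Row-wise softmax; subtracting the row max first avoids overflow in exp."""
    shifted = z - z.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def categorical_cross_entropy(probs: np.ndarray, labels: np.ndarray) -> float:
    """Mean negative log-probability assigned to each sample's true class (integer labels)."""
    eps = 1e-12  # guards against log(0)
    return float(-np.mean(np.log(probs[np.arange(len(labels)), labels] + eps)))


rng = np.random.default_rng(0)
# Assumed toy shapes: 4 samples, 3 input features, 5 hidden units, 3 output classes.
X = rng.normal(size=(4, 3))
y = np.array([0, 2, 1, 2])
W1, b1 = rng.normal(scale=0.5, size=(3, 5)), np.zeros(5)
W2, b2 = rng.normal(scale=0.5, size=(5, 3)), np.zeros(3)

hidden = relu(X @ W1 + b1)         # input layer -> hidden layer
probs = softmax(hidden @ W2 + b2)  # hidden layer -> class probabilities
loss = categorical_cross_entropy(probs, y)
print(probs.round(3), loss)
```

Each affine-plus-activation step here corresponds to what a Keras Dense layer or a PyTorch nn.Linear followed by an activation computes.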

Chapter 11: Real-World Portfolio Projects to Showcase Your Skills

Theory means nothing without proof. To learn Python for data science effectively, build 3–5 portfolio projects. They should demonstrate EDA, visualization, feature engineering, modeling, and evaluation. Publish code on GitHub with a detailed README and a live demo via Streamlit or Hugging Face Spaces. Example project 1: Customer Segmentation. Use k-means on retail transaction data, visualize clusters with PCA, and build a Streamlit app that takes customer features as input and returns a segment. Example project 2: House Price Prediction. Scrape Zillow data (with permission), clean outliers, engineer features like price per square foot, train a random forest, and serve predictions via FastAPI. Example project 3: Real-time Sentiment Analysis. Use Tweepy to collect tweets, apply a pretrained BERT model (Hugging Face Transformers), and plot sentiment over time. Each project should solve a real problem. Also contribute to open source: fix a documentation bug in pandas or add a test to scikit-learn. Recruiters in the $230B industry actively scan GitHub. Write clean, documented code with type hints (def predict_price(features: dict) -> float:). A strong portfolio will get you interviews even without a formal degree.
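
The core of example project 1 could look like the sketch below: scale the features, cluster with k-means, and project to two dimensions with PCA for plotting. The customer features and groups are synthetic and hypothetical, as is the predict_segment helper, which illustrates the kind of type-hinted function a Streamlit or FastAPI front end might call.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical customer features: [annual_spend, visits_per_month, avg_basket_size].
rng = np.random.default_rng(7)
customers = np.vstack([
    rng.normal([500, 2, 25], [80, 0.5, 5], size=(50, 3)),      # occasional shoppers
    rng.normal([2500, 8, 40], [300, 1.5, 8], size=(50, 3)),    # frequent shoppers
    rng.normal([6000, 4, 150], [600, 1.0, 20], size=(50, 3)),  # big-basket shoppers
])

# Scale first: k-means uses Euclidean distance, so unscaled spend would dominate.
segmenter = make_pipeline(StandardScaler(), KMeans(n_clusters=3, n_init=10, random_state=0))
labels = segmenter.fit_predict(customers)

# 2-D PCA projection of the scaled features, e.g. for a scatter plot colored by segment.
scaled = segmenter.named_steps["standardscaler"].transform(customers)
coords_2d = PCA(n_components=2).fit_transform(scaled)


def predict_segment(features: dict[str, float]) -> int:
    """Hypothetical helper: map one customer's features to a segment label."""
    row = np.array([[features["annual_spend"], features["visits_per_month"], features["avg_basket_size"]]])
    return int(segmenter.predict(row)[0])


print(np.bincount(labels))
print(predict_segment({"annual_spend": 2400, "visits_per_month": 7, "avg_basket_size": 38}))
```

Keeping the scaler and k-means in one pipeline means the app applies exactly the same scaling to new inputs that was used during clustering.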

Chapter 12: Career Pathways – From Learner to $230B Industry Professional

You have the skills. Now, how do you monetize them? After you learn Python for data science, consider these roles: Data Analyst (entry level, focus on SQL and pandas), Data Scientist (mid-level, ML and statistics), Machine Learning Engineer (deployment and MLOps), Data Engineer (pipelines and Spark), and AI Researcher (PhD often required). Salaries in the $230B industry range from $80k (junior analyst) to $250k+ (senior ML engineer at FAANG). To land a job, follow this plan: 1) Earn a certification, such as the IBM Data Science Professional Certificate or the Google Advanced Data Analytics certificate (both Python-heavy). 2) Network on LinkedIn: share your projects and write posts about what you are learning. 3) Practice interview questions: LeetCode SQL problems, pandas challenges (StrataScratch), and ML system design. 4) Apply to 100+ jobs, but tailor each resume to highlight your Python projects. 5) Prepare for take-home challenges, since many companies will ask you to analyze a dataset. Also consider freelancing on Upwork or Toptal to gain experience. Finally, never stop learning. The $230B industry evolves weekly: new libraries (Polars, Ibis), LLM frameworks (LangChain, LlamaIndex), and MLOps tools (MLflow, Weights & Biases). When you learn Python for data science, you commit to lifelong learning. Join communities like Kaggle, DataTalks.Club, and PyData. Attend conferences (even virtual ones). The roadmap is long, but the destination is rewarding.

Conclusion: Your First Step Today

The $230 billion data science industry is not a distant future: 2026 is less than two years away. Every month you delay, the barrier to entry rises slightly higher. But here is the good news: you do not need a PhD or ten years of experience. You just need a systematic plan and consistent effort. To learn Python for data science, open your terminal tonight and install Anaconda. Tomorrow, write your first print("Hello, Data Science"). Within two weeks, you will be slicing NumPy arrays. Within two months, you will be building random forests. Within six months, you will have a portfolio that impresses hiring managers. The $230B industry is hungry for diverse, curious, persistent people like you. So bookmark this roadmap, share it with a friend, and begin. The only bad pace is standing still. Now go learn Python for data science and claim your place in the data revolution.

Final Call to Action:

Ready to accelerate? Download our free 2026 Python for Data Science Checklist (link in bio). Join our newsletter for weekly coding challenges. And remember: every expert once struggled with their first KeyError. Keep coding. The $230B industry is waiting.
