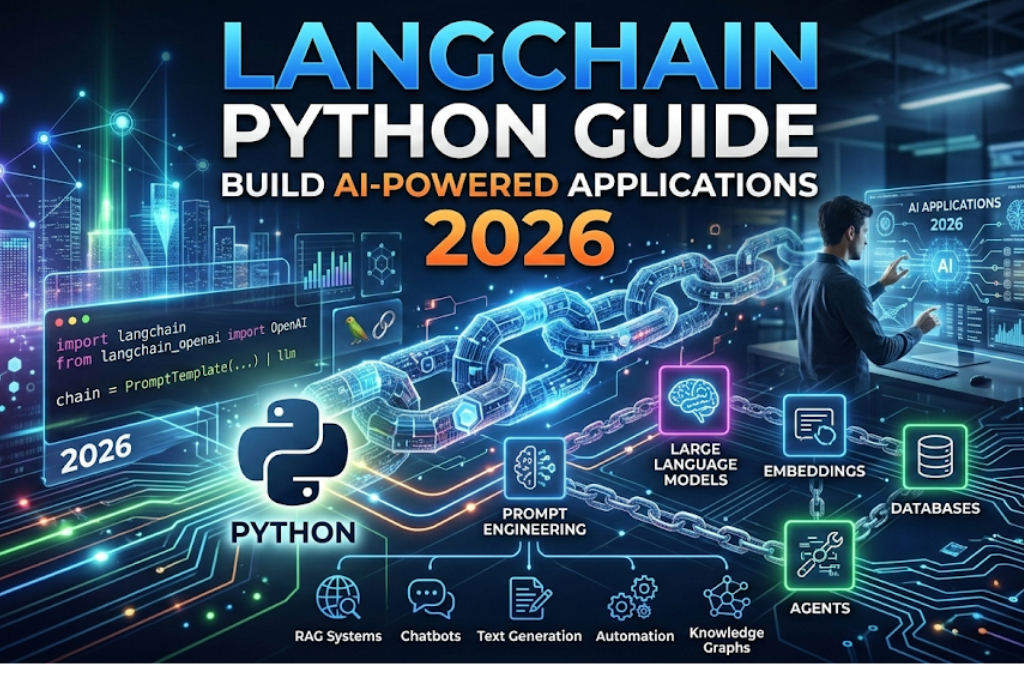

Introduction: Why Python Remains the Undisputed King of AI Development

Remember when critics declared Python’s reign was over? When faster languages like Rust and Go were supposed to render it obsolete for serious computing tasks? Then the Large Language Model (LLM) revolution happened—and Python didn’t just survive; it exploded back into the spotlight with unprecedented force.

Today, Python powers the most sophisticated AI systems being built by startups and enterprises alike. According to the 2025 Stack Overflow Developer Survey, Python usage rose by 7 percentage points from 2024 to 2025, a jump the survey attributes to its role as a go-to language for AI, data science, and back-end development. But what’s driving this remarkable resurgence?

The answer lies in Python’s unique position at the intersection of accessibility and power. Its intuitive syntax makes it the language of choice for researchers rapidly prototyping new ideas. Its massive ecosystem of specialized libraries—from Hugging Face’s Transformers to LangChain to PyTorch—provides battle-tested components for every stage of LLM development. And its ability to seamlessly integrate with high-performance C/C++ code means you never sacrifice speed when you need it.

In this comprehensive guide, you’ll learn exactly how to harness Python’s capabilities to build, deploy, and scale LLM-powered applications in 2026. Whether you’re building your first AI chatbot or architecting enterprise-grade agent systems, this article will give you the practical knowledge and battle-tested patterns you need to succeed.

Part 1: The Evolution of Python in the LLM Era

From Stagnation to Resurgence: A Language Reborn

For years, Python faced legitimate criticism. Its dynamic typing led to runtime errors that compiled languages catch at build time. Its Global Interpreter Lock (GIL) limited true parallelism. Memory overhead made it less than ideal for resource-constrained environments.

Then came the transformer architecture and the realization that raw execution speed matters less than development velocity when you’re exploring the frontiers of AI. The problems LLM developers needed to solve weren’t about shaving microseconds off loop iterations—they were about orchestrating complex workflows, integrating diverse data sources, and rapidly iterating on prompt strategies.

Python’s strengths suddenly became exactly what the AI revolution needed:

- Rapid prototyping allowed researchers to test hypotheses in hours instead of days

- Rich ecosystem provided pre-built components for every imaginable task

- Glue language capabilities made it easy to connect specialized tools and services

- Accessible syntax lowered the barrier to entry for the wave of new AI practitioners

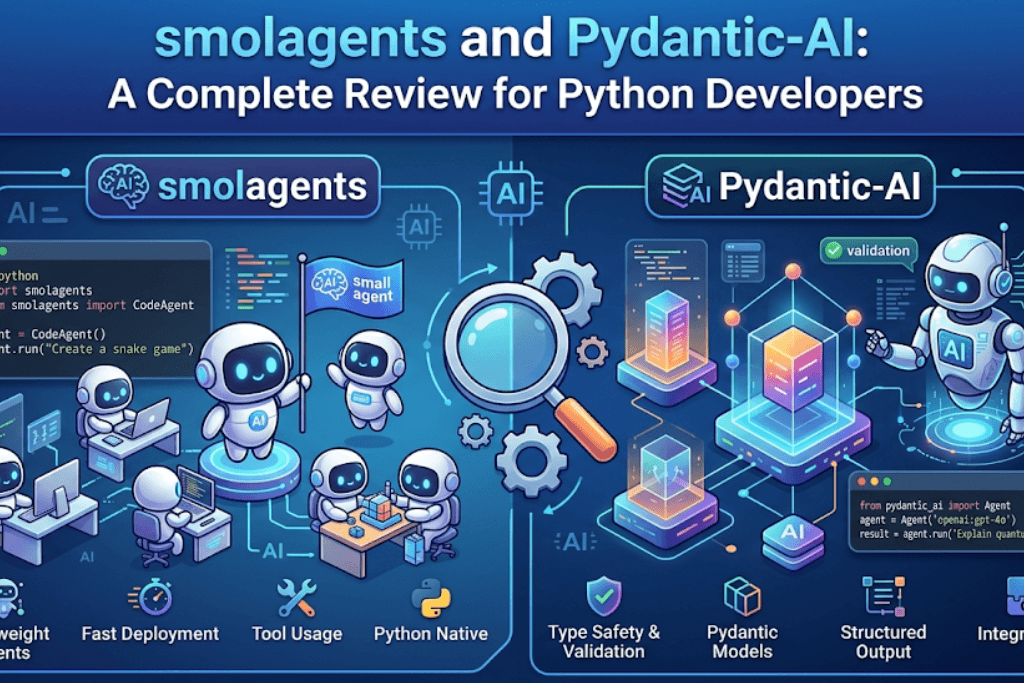

The 2026 AI Developer’s Toolkit: Essential Python Libraries

Today’s LLM developer has access to an unprecedented collection of Python libraries. Here’s what you need to know about the most important ones:

| Library | Primary Use Case | Key Features |
|---|---|---|
| Transformers (Hugging Face) | Pre-trained models, fine-tuning | 100,000+ pretrained models, unified API |
| LangChain | LLM application orchestration | Prompt chaining, memory management, external data integration |
| PyTorch | Deep learning, custom model training | Dynamic computation graphs, production-ready, research-friendly |
| DSPy | Production LLM applications | Declarative programming, automatic prompt optimization, model-agnostic |
| Pydantic AI | Type-safe agent development | Structured outputs, automatic validation, dependency injection |
| Llama.cpp (via Python bindings) | Local model inference | CPU/GPU hybrid execution, GGUF format support, efficient quantization |

The landscape has matured significantly. Where developers once had to cobble together solutions from raw API calls, today’s frameworks provide production-ready patterns that handle everything from type safety to observability.

Part 2: Core LLM Development Patterns with Python

Pattern 1: Prompt Engineering at Scale

Prompt engineering remains the most accessible way to improve LLM performance, but in 2026, it’s no longer about manual trial and error. Modern Python frameworks bring systematic approaches to prompt optimization.

The Old Way:

```python
import openai

# Hardcoded prompts scattered throughout your codebase
# (legacy pre-1.0 openai SDK call style)
response = openai.ChatCompletion.create(
    model="gpt-3.5-turbo",
    messages=[{"role": "system", "content": "You are a helpful assistant."}]
)
```

The 2026 Way with DSPy:

```python
import dspy

# Assumption: swap in whichever model ID your provider supports
dspy.configure(lm=dspy.LM("openai/gpt-4.1-mini"))

class SentimentAnalyzer(dspy.Signature):
    """Analyze the sentiment of the provided text."""

    text: str = dspy.InputField()
    sentiment: int = dspy.OutputField(desc="1=negative, 2=neutral, 3=positive")

# Create a chain-of-thought module from the signature; its prompt can later
# be tuned with a DSPy optimizer
analyzer = dspy.ChainOfThought(SentimentAnalyzer)

result = analyzer(text="I love this product!")
print(f"Sentiment: {result.sentiment}")  # Returns a structured integer
```

DSPy’s declarative approach allows you to focus on what you want rather than how to prompt for it. The framework handles prompt construction and model-specific formatting automatically, and its optimizers can tune the prompts against a metric you define.

Production Best Practices for Prompt Management:

- Centralize prompt templates in configuration files or dedicated modules rather than hardcoding them

- Version control your prompts alongside your code—they’re as important as any algorithm

- Use prompt optimization frameworks like DSPy to systematically improve performance rather than guessing

- Implement A/B testing for prompt changes in production environments
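The practices above can be sketched in a few lines of plain Python. This is a minimal illustration, not a recommended library: the `PromptTemplate` class, the `PROMPTS` registry, and the `assign_variant` helper are all hypothetical names, and the hash-based bucketing is just one simple way to keep A/B assignment stable per user.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    name: str
    version: str
    template: str

    def render(self, **variables: str) -> str:
        # str.format raises KeyError if a placeholder is missing,
        # which surfaces template/variable mismatches early.
        return self.template.format(**variables)


# Hypothetical central registry; in practice this could be loaded from
# a YAML or JSON file that lives in version control next to the code.
PROMPTS = {
    ("summarize", "v1"): PromptTemplate(
        "summarize", "v1", "Summarize the following text:\n{text}"
    ),
    ("summarize", "v2"): PromptTemplate(
        "summarize", "v2", "Summarize the text below in at most {n} sentences:\n{text}"
    ),
}


def assign_variant(user_id: str, variants: list[str]) -> str:
    """Deterministically map a user to one prompt version for A/B testing."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


version = assign_variant("user-42", ["v1", "v2"])
prompt = PROMPTS[("summarize", version)].render(text="Python is popular.", n="2")
print(version)
print(prompt)
```

Because the variant is derived from a hash of the user ID, the same user always sees the same prompt version, which keeps comparisons between versions clean.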

Pattern 2: Structured Data Extraction

Structured data extraction represents what many experts call “the most economically valuable application of LLMs today”. The ability to convert messy, unstructured text into clean, typed data structures opens automation possibilities across every industry.

Using Pydantic for Type-Safe Extraction:

Pydantic AI has emerged as the leading framework for this pattern, offering FastAPI-style type safety for LLM interactions:

```python
from pydantic import BaseModel, Field
from pydantic_ai import Agent

class ContractInfo(BaseModel):
    """Extracted information from a contract document."""

    parties: list[str] = Field(description="All parties named in the contract")
    effective_date: str = Field(description="Contract effective date in YYYY-MM-DD format")
    termination_clause: str = Field(description="Summary of termination provisions")
    governing_law: str = Field(description="Jurisdiction governing the contract")

agent = Agent(
    "openai:gpt-4.1",
    output_type=ContractInfo,
    instructions="Extract contract information precisely. If a field is missing, indicate that explicitly."
)

# Automatic validation and retry on failure
result = agent.run_sync("""This Agreement between Acme Inc. and Beta Corp...
Effective as of January 15, 2025...""")

print(f"Parties: {result.output.parties}")  # Type-safe access with IDE autocomplete
```

Why This Pattern Matters:

The combination of Pydantic’s validation and LLM flexibility creates a powerful tool for:

- Invoice processing – Extract line items, totals, and vendor information

- Resume parsing – Convert unstructured CVs into standardized candidate profiles

- Legal document analysis – Extract clauses, dates, and obligations from contracts

- Customer support ticket classification – Convert free-text tickets into structured issue reports
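To make the validate-and-retry idea behind these use cases concrete, here is a standard-library sketch for the invoice case. It is not how Pydantic AI is implemented; the `Invoice` dataclass, `parse_invoice`, `extract_with_retry`, and the `fake_model` stand-in are all hypothetical, and a real system would call an actual model and use a fuller schema.

```python
import json
from dataclasses import dataclass


@dataclass
class Invoice:
    vendor: str
    total: float


def parse_invoice(raw: str) -> Invoice:
    """Parse and validate model output; raise ValueError on any problem."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("vendor"), str):
        raise ValueError("'vendor' must be a string")
    total = data.get("total")
    if isinstance(total, bool) or not isinstance(total, (int, float)):
        raise ValueError("'total' must be a number")
    return Invoice(vendor=data["vendor"], total=float(total))


def extract_with_retry(call_model, text: str, max_attempts: int = 3) -> Invoice:
    """Call the model, validate, and feed the error back on failure."""
    prompt = f"Extract vendor and total as JSON from: {text}"
    last_error = None
    for _ in range(max_attempts):
        raw = call_model(prompt)
        try:
            return parse_invoice(raw)
        except ValueError as exc:
            last_error = exc
            prompt += f"\nYour previous answer was rejected: {exc}. Return only JSON."
    raise RuntimeError(f"extraction failed after {max_attempts} attempts: {last_error}")


# Hypothetical stand-in for a real LLM call: fails once, then succeeds.
_replies = iter(["Sure! The vendor is Acme.", '{"vendor": "Acme Inc.", "total": 1250.5}'])


def fake_model(prompt: str) -> str:
    return next(_replies)


invoice = extract_with_retry(fake_model, "Invoice from Acme Inc., total $1,250.50")
print(invoice)
```

The key design choice is that validation errors are appended to the next prompt, so the model gets a specific reason for the rejection instead of a blind retry.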

Pattern 3: Retrieval-Augmented Generation (RAG)

RAG remains the standard solution for grounding LLM responses in your specific data while reducing hallucinations. In 2026, the implementation has matured significantly beyond simple vector search.

Modern RAG Architecture in Python:

```python
# Imports follow the split-package layout (langchain-openai, langchain-chroma).
# RetrievalQA is a legacy chain; depending on your LangChain version it may
# instead live in the langchain-classic package.
from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from langchain_chroma import Chroma
from langchain.chains import RetrievalQA

# 1. Create embeddings from your documents
# (`your_documents` is a placeholder for a list of LangChain Document objects)
embeddings = OpenAIEmbeddings(model="text-embedding-3-small")
vectorstore = Chroma.from_documents(
    documents=your_documents,
    embedding=embeddings
)

# 2. Build retrieval chain with advanced features
qa_chain = RetrievalQA.from_chain_type(
    llm=ChatOpenAI(model="gpt-4.1"),
    retriever=vectorstore.as_retriever(
        search_type="mmr",  # Maximum Marginal Relevance for diversity
        search_kwargs={"k": 5, "fetch_k": 20}
    ),
    return_source_documents=True,
    chain_type="map_reduce"  # For long document handling
)

# 3. Query with confidence
response = qa_chain.invoke({"query": "What does our Q3 policy say about expense approvals?"})
```

Key RAG Insights for 2026:

- Hybrid search (combining vector similarity with keyword matching) often outperforms pure vector search

- Long context models haven’t killed RAG—they’ve made it more powerful by allowing larger retrieved chunks

- Tool-calling approaches where the LLM decides when and what to retrieve are increasingly replacing single-shot RAG
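One common way to combine vector and keyword results is reciprocal rank fusion (RRF). The article does not say which fusion method to use, so treat this as one reasonable option rather than the method; the document IDs and both hit lists are made up for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by score (descending), then by ID for a stable, deterministic order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from the two retrievers for the same query.
vector_hits = ["doc_a", "doc_b", "doc_c"]
keyword_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents that appear near the top of both lists (`doc_a`, `doc_c`) rise above documents that only one retriever found, which is the behavior hybrid search is after.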

Pattern 4: Agentic Systems with Tool Use

The most exciting development in 2026 is the maturation of LLM agents—systems that can reason, plan, and take actions by calling external tools.

Building Agents with Pydantic AI:

Pydantic AI provides a clean, type-safe approach to agent development:

```python
import asyncio

from pydantic_ai import Agent, RunContext

class DatabaseDeps:
    """Dependencies injected into agent tools."""

    def __init__(self, connection_string: str):
        self.conn_string = connection_string

async def fetch_sales(conn_string: str, date_range: str) -> list[dict]:
    """Placeholder for the actual database logic."""
    raise NotImplementedError

agent = Agent(
    "google-gla:gemini-2.5-flash",
    deps_type=DatabaseDeps,
    instructions="You help users query the sales database."
)

@agent.tool
async def query_sales(ctx: RunContext[DatabaseDeps], date_range: str) -> list[dict]:
    """Query sales data for the specified date range."""
    results = await fetch_sales(ctx.deps.conn_string, date_range)
    return results

async def main():
    # Usage with dependency injection
    deps = DatabaseDeps("postgresql://localhost/sales")
    result = await agent.run("What were our top 5 products last month?", deps=deps)
    print(result.output)

asyncio.run(main())
```

The Agent Architecture Stack in 2026:

| Layer | Purpose | Python Tools |
|---|---|---|
| Orchestration | Multi-agent coordination | LangChain, AutoGen, CrewAI |
| Tool Definition | Function calling interface | Pydantic AI, DSPy tools |
| Memory Management | Conversation state | LangChain memory, custom vector stores |
| Observability | Tracing and monitoring | Phoenix, LangSmith, Weights & Biases |
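To show what the tool-definition and orchestration layers do underneath the frameworks, here is a framework-free sketch of a tool-calling loop. The `TOOLS` registry, the `tool` decorator, the message shapes, and `fake_model` are all assumptions made for this example; real providers use their own request and response formats.

```python
import json
from typing import Callable

# Registry mapping tool names to plain Python callables.
TOOLS: dict[str, Callable[..., object]] = {}


def tool(func: Callable[..., object]) -> Callable[..., object]:
    """Register a function so the agent loop can call it by name."""
    TOOLS[func.__name__] = func
    return func


@tool
def add(a: float, b: float) -> float:
    return a + b


def fake_model(messages: list[dict]) -> dict:
    """Hypothetical stand-in for an LLM: requests a tool once, then answers."""
    if messages[-1]["role"] == "tool":
        return {"type": "final", "content": f"The result is {messages[-1]['content']}."}
    return {"type": "tool_call", "name": "add", "arguments": json.dumps({"a": 2, "b": 3})}


def run_agent(user_message: str, max_steps: int = 5) -> str:
    messages = [{"role": "user", "content": user_message}]
    for _ in range(max_steps):
        reply = fake_model(messages)
        if reply["type"] == "final":
            return reply["content"]
        # Look up the requested tool, run it, and feed the result back.
        func = TOOLS[reply["name"]]
        result = func(**json.loads(reply["arguments"]))
        messages.append({"role": "tool", "content": str(result)})
    raise RuntimeError("agent did not finish within max_steps")


answer = run_agent("What is 2 + 3?")
print(answer)  # The result is 5.
```

The `max_steps` cap is worth keeping in any real loop: it bounds cost and prevents an agent from cycling through tool calls indefinitely.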

Part 3: Production-Ready Architecture Patterns

Enterprise AI System Design: The Model Routing Pattern

Not every task requires your most expensive model. Enterprise AI systems in 2026 use intelligent routing to balance quality and cost.

```python
from enum import Enum
from typing import Optional

class TaskComplexity(Enum):
    SIMPLE = "simple"
    COMPLEX = "complex"
    REASONING = "reasoning"

class ModelRouter:
    """Intelligently route tasks to the right model."""

    def __init__(self):
        # Prices in the comments are illustrative; check current provider pricing
        self.models = {
            TaskComplexity.SIMPLE: "gpt-3.5-turbo",  # $0.50/1M tokens
            TaskComplexity.COMPLEX: "gpt-4.1",       # $2.50/1M tokens
            TaskComplexity.REASONING: "o4-mini"      # $5.00/1M tokens
        }

    async def route(self, task: str, estimated_complexity: Optional[TaskComplexity] = None):
        if estimated_complexity is None:
            # Use a cheap model to classify complexity first
            estimated_complexity = await self._classify_complexity(task)
        model = self.models[estimated_complexity]
        return await self._call_model(model, task)

    async def _classify_complexity(self, task: str) -> TaskComplexity:
        """Placeholder: ask a cheap model to label the task."""
        raise NotImplementedError

    async def _call_model(self, model: str, task: str) -> str:
        """Placeholder: send the task to the chosen model."""
        raise NotImplementedError
```

Companies implementing intelligent model routing report cost savings of 70-90% compared to using their most expensive model for every request.
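A quick back-of-the-envelope calculation shows where savings in that range can come from. The prices are the illustrative figures from the router example, and the traffic mix is purely hypothetical; the sketch also ignores the small cost of the classification call itself.

```python
PRICE_PER_MILLION = {"simple": 0.50, "complex": 2.50, "reasoning": 5.00}

# Hypothetical traffic mix: fraction of tokens handled by each tier.
TRAFFIC_SHARE = {"simple": 0.80, "complex": 0.15, "reasoning": 0.05}


def blended_price(prices: dict[str, float], shares: dict[str, float]) -> float:
    """Average price per million tokens under a given traffic mix."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(prices[tier] * share for tier, share in shares.items())


routed = blended_price(PRICE_PER_MILLION, TRAFFIC_SHARE)
baseline = max(PRICE_PER_MILLION.values())  # every request on the priciest model
savings = 1 - routed / baseline

print(f"routed: ${routed:.3f}/1M tokens, baseline: ${baseline:.2f}/1M tokens")
print(f"savings: {savings:.1%}")
```

With this mix the blended price is $1.025 per million tokens against a $5.00 baseline, a saving of roughly 79.5%. The result is very sensitive to the share of traffic that truly needs the top tier.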

The Agent Security Crisis: Building Safe Systems

The recent OpenClaw security disaster—where researchers found 341 malicious skills (12% of the entire registry) including keyloggers with professional documentation—highlighted a critical truth: AI agents are only as safe as their supervision model.

The Dual LLM Pattern for Security:

```python
from pydantic_ai import Agent

class SecureAgent:
    """An agent that verifies actions before execution."""

    def __init__(self):
        # `all_tools` is a placeholder for your list of tool functions
        self.action_llm = Agent("openai:gpt-4.1", tools=all_tools)
        self.verifier_llm = Agent("openai:gpt-4.1", instructions="""
            You are a security verifier. Review the proposed action and determine if it could:
            1. Modify data without authorization
            2. Access sensitive information
            3. Execute commands on the host system
            Respond with VERIFIED or REJECTED as the first word, then explain why.
            """)

    async def execute_with_verification(self, user_request: str):
        # Generate proposed action
        proposed = await self.action_llm.run(user_request)

        # Verify before execution
        verification = await self.verifier_llm.run(
            f"Proposed action: {proposed.output}\nUser request: {user_request}"
        )

        # Check the leading verdict word; a substring test would also match
        # explanations such as "REJECTED, cannot be VERIFIED"
        if verification.output.strip().upper().startswith("VERIFIED"):
            # `_execute` is a placeholder for your own action runner
            return await self._execute(proposed.output)
        else:
            return {"error": "Action rejected by security verification", "reason": verification.output}
```

As security expert Nate B Jones notes, “The problem is not the agent itself but the supervision model. Developers treating AI agents like trusted colleagues instead of powerful-but-unsupervised contractors are one bad session away from losing real production work”.
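An LLM verifier can itself be fooled, so a supervision model usually benefits from a deterministic layer as well. The following is a minimal sketch of such a gate, assuming a simple allowlist policy; the tool names and the `check_action` helper are hypothetical and not part of any framework mentioned here.

```python
from dataclasses import dataclass

# Hypothetical policy: which tools may run unattended and which need sign-off.
AUTO_APPROVED = {"search_docs", "read_ticket"}
NEEDS_HUMAN = {"send_email", "update_record"}


@dataclass
class Decision:
    allowed: bool
    reason: str


def check_action(tool_name: str, approved_by_human: bool = False) -> Decision:
    """Gate a proposed tool call with a deterministic allowlist."""
    if tool_name in AUTO_APPROVED:
        return Decision(True, "tool is on the auto-approved list")
    if tool_name in NEEDS_HUMAN:
        if approved_by_human:
            return Decision(True, "human approval recorded")
        return Decision(False, "human approval required")
    # Default-deny: anything not explicitly listed is rejected.
    return Decision(False, "tool is not on any allowlist")


for name in ("search_docs", "send_email", "run_shell"):
    print(name, check_action(name))
```

The default-deny branch is the important part: a newly installed or unknown tool cannot run until someone deliberately adds it to a list.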

Observability: You Can’t Improve What You Can’t Measure

Production LLM systems require comprehensive observability. The Phoenix platform provides automatic tracing for all LLM calls:

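```python
import phoenix as px
from phoenix.otel import register

# Start the local Phoenix UI, then point tracing at it
px.launch_app()

# auto_instrument=True picks up installed OpenInference instrumentors
# (for example openinference-instrumentation-dspy or -langchain)
tracer_provider = register(auto_instrument=True)

# With the matching instrumentor installed, DSPy or LangChain calls are traced,
# including:
# - Full prompt content sent to models
# - Raw responses received
# - Token usage per call
# - Tool call trajectories
```

This level of visibility is essential for debugging, cost optimization, and understanding where your system succeeds or fails.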
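If you want to see what a trace span captures before adopting a platform, a small decorator is enough to illustrate the idea. This is not Phoenix's API; the `traced` decorator, the in-memory `SPANS` list, and `fake_llm_call` are hypothetical, and the convention that a call returns a dict with a `tokens` key is an assumption made for the sketch.

```python
import functools
import time

# In-memory span store; a real tracer would export these to a backend.
SPANS: list[dict] = []


def traced(func):
    """Record name, latency, and outcome for each call to func."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        span = {"name": func.__name__, "status": "ok"}
        try:
            result = func(*args, **kwargs)
            # Assumed convention: the wrapped call returns a dict that
            # may carry a token count under "tokens".
            if isinstance(result, dict):
                span["tokens"] = result.get("tokens")
            return result
        except Exception as exc:
            span["status"] = f"error: {type(exc).__name__}"
            raise
        finally:
            span["latency_s"] = time.perf_counter() - start
            SPANS.append(span)
    return wrapper


@traced
def fake_llm_call(prompt: str) -> dict:
    """Hypothetical stand-in for a model call."""
    return {"text": prompt.upper(), "tokens": len(prompt.split())}


fake_llm_call("hello tracing world")
print(SPANS)
```

Recording the span in `finally` means failed calls are captured too, which is often where tracing pays for itself.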

Part 4: The Local Model Revolution

Running Production Models on Consumer Hardware

One of 2026’s most significant developments is the ability to run powerful models on local hardware. The Flash-MoE project demonstrated running Qwen3.5-397B (397 billion parameters) on a 48GB MacBook Pro at 5.5 tokens per second using just 5.5GB of resident memory.

For Python developers, this means:

```python
from llama_cpp import Llama

# Load a quantized model that runs on CPU
llm = Llama(
    model_path="./models/mixtral-8x7b-instruct-v0.1.Q4_K_M.gguf",
    n_ctx=8192,       # Context window
    n_threads=8,      # CPU threads
    n_gpu_layers=35   # Offload some layers to GPU if available
)

response = llm.create_chat_completion(
    messages=[{"role": "user", "content": "Explain quantum computing simply."}],
    temperature=0.7,
    max_tokens=500
)
```

Why Local Models Matter:

- Data privacy – Sensitive information never leaves your infrastructure

- Latency – No network round trips to API providers

- Cost predictability – No per-token pricing surprises

- Offline capability – AI functionality without internet access
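Whether a model fits on your machine is mostly arithmetic: parameter count times bits per weight. The sketch below assumes about 46.7 billion parameters for a Mixtral-8x7B-class model and roughly 4.5 bits per weight for a 4-bit-class quantization; both are approximations, and the estimate covers weights only, not the KV cache or runtime overhead.

```python
def estimate_weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of model weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed figures for illustration: ~46.7B parameters for a Mixtral-8x7B-class
# model, 16 bits for half precision, ~4.5 bits for a 4-bit-class quantization.
params = 46.7e9
fp16_gb = estimate_weights_gb(params, 16)
q4_gb = estimate_weights_gb(params, 4.5)

print(f"fp16 weights: ~{fp16_gb:.1f} GB")
print(f"4-bit-class weights: ~{q4_gb:.1f} GB")
```

Under these assumptions, quantization brings the weights from about 93 GB down to about 26 GB, which is the difference between needing a multi-GPU server and fitting on a well-equipped workstation.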

The Open Source Model Landscape

Today’s open-weight models rival their proprietary counterparts, and smaller models in general benefit from systematic optimization. In one DSPy demonstration, an optimized smaller model (GPT-4.1 nano) improved from 70% to 87% of GPT-4.1’s performance at a fraction of the cost.

Key open models to watch in 2026:

- DeepSeek V4 – 1 trillion parameters (37B active), 1M token context, $0.28/million input tokens

- Llama 3.5 family – Strong performance across sizes

- Qwen 2.5 series – Excellent multilingual and multimodal capabilities

- Kimi K2.5 – Strong reasoning, used as base for Cursor Composer 2

Part 5: Getting Started – Practical Learning Path

For Beginners: Your First Week with Python LLM Development

Day 1-2: Environment Setup

```bash
# Use Poetry for dependency management (the modern standard)
curl -sSL https://install.python-poetry.org | python3 -
poetry new my-ai-project
cd my-ai-project
poetry add openai python-dotenv pydantic

# Set up your API keys
echo "OPENAI_API_KEY=your_key_here" > .env
```

Day 3-4: Build Your First Chatbot

```python
from dotenv import load_dotenv
from openai import OpenAI
from pydantic import BaseModel

load_dotenv()  # Read OPENAI_API_KEY from the .env file created earlier

class Response(BaseModel):
    answer: str
    confidence: float

client = OpenAI()

response = client.beta.chat.completions.parse(
    model="gpt-4.1-mini",
    messages=[
        {"role": "system", "content": "You are a helpful Python tutor."},
        {"role": "user", "content": "Explain decorators in Python"}
    ],
    response_format=Response
)

# The parsed Pydantic object lives on the first choice's message
parsed = response.choices[0].message.parsed
print(f"Answer: {parsed.answer}")
print(f"Confidence: {parsed.confidence}")
```

Day 5-7: Add Memory and Tools

Build on the workshop patterns from the GitHub repository, implementing:

- Rolling conversation memory

- Tool-calling for calculations or data fetching

- Basic RAG with your own documents
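Rolling conversation memory is the easiest of the three to start with. Here is a minimal sketch using only the standard library; the `RollingMemory` class is a hypothetical helper, and it trims by message count, whereas a production version would more likely trim by token count.

```python
from collections import deque


class RollingMemory:
    """Keep the system prompt plus the most recent conversation turns."""

    def __init__(self, system_prompt: str, max_messages: int = 6):
        self.system = {"role": "system", "content": system_prompt}
        # deque with maxlen silently drops the oldest entry when full.
        self.history = deque(maxlen=max_messages)

    def add(self, role: str, content: str) -> None:
        self.history.append({"role": role, "content": content})

    def messages(self) -> list[dict]:
        """Return the message list to send with the next request."""
        return [self.system, *self.history]


memory = RollingMemory("You are a helpful Python tutor.", max_messages=2)
memory.add("user", "What is a decorator?")
memory.add("assistant", "A function that wraps another function.")
memory.add("user", "Show me an example.")

for message in memory.messages():
    print(message["role"], "->", message["content"])
```

The system prompt is stored outside the deque so it is never evicted; only the oldest user and assistant turns fall out of the window.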

For Experienced Developers: Advanced Resources

- DSPy Workshop (AlixPartners, 2026) – Deep dive into production patterns

- Pydantic AI Documentation – Comprehensive examples of type-safe agents

- Simon Willison’s PyCon Workshop – Building software on LLMs with practical exercises

Part 6: Future Trends and Predictions

The OpenAI-Astral Acquisition: What It Means for Python

In March 2026, OpenAI acquired Astral, the company behind uv (126M monthly downloads) and ruff (a linter and formatter its documentation describes as 10-100x faster than existing tools). This acquisition signals a strategic shift: model providers are now competing on developer experience, not just model capability.

For Python developers, this means:

- Tighter integration between AI coding assistants and development tooling

- Native understanding of package management, linting, and type systems in AI tools

- Potential lock-in effects as the Python toolchain becomes AI-integrated

The Pricing Race: Costs Are Plummeting

Model pricing continues to drop dramatically:

- DeepSeek V4: $0.28/million input tokens

- GPT-5.4 Mini: $0.75/million input tokens

- GPT-5.4 Nano: $0.20/million input tokens

- Gemini 3.1 Flash-Lite: $0.25/million input tokens

At these prices, the cost of LLM-powered features becomes negligible for many applications. The constraint shifts from cost to architectural feasibility.
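To put those prices in perspective, here is a rough monthly estimate for input tokens only. The workload of one million requests at 1,500 input tokens each is hypothetical, and output tokens, which are typically priced higher, are left out.

```python
# Input prices per million tokens, as listed above.
INPUT_PRICE_PER_MILLION = {
    "DeepSeek V4": 0.28,
    "GPT-5.4 Mini": 0.75,
    "GPT-5.4 Nano": 0.20,
    "Gemini 3.1 Flash-Lite": 0.25,
}

# Hypothetical workload.
REQUESTS_PER_MONTH = 1_000_000
INPUT_TOKENS_PER_REQUEST = 1_500


def monthly_input_cost(price_per_million: float) -> float:
    total_tokens = REQUESTS_PER_MONTH * INPUT_TOKENS_PER_REQUEST
    return total_tokens / 1_000_000 * price_per_million


for model, price in INPUT_PRICE_PER_MILLION.items():
    print(f"{model}: ${monthly_input_cost(price):,.2f} per month")
```

Even at a million requests a month, input costs under these assumptions stay between roughly $300 and $1,125, which is why the bottleneck moves from budget to architecture.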

Agentic Scaling: The Next Frontier

Nvidia’s GTC 2026 introduced “agentic scaling” as the fourth phase of AI (following pretraining, fine-tuning, and test-time scaling). This represents systems where AI agents work together, spawn sub-agents, and iterate on complex tasks autonomously.

Python’s role in this future is clear: as the orchestration language for multi-agent systems, Python will be the interface through which developers coordinate increasingly sophisticated AI workflows.
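The orchestration role can be sketched with nothing more than `asyncio`. In this minimal example an orchestrator fans work out to sub-agents and collects the results; `sub_agent` and `orchestrator` are hypothetical stand-ins, and a real sub-agent would call a model or tools instead of returning a string.

```python
import asyncio


async def sub_agent(name: str, subtask: str) -> str:
    """Hypothetical sub-agent; a real one would call a model or tools."""
    await asyncio.sleep(0)  # yield control, standing in for I/O
    return f"{name} finished: {subtask}"


async def orchestrator(subtasks: list[str]) -> list[str]:
    """Spawn one sub-agent per subtask and wait for all of them."""
    jobs = [sub_agent(f"agent-{i}", s) for i, s in enumerate(subtasks, start=1)]
    # gather runs the coroutines concurrently and preserves input order.
    return await asyncio.gather(*jobs)


results = asyncio.run(
    orchestrator(["collect data", "draft summary", "check facts"])
)
for line in results:
    print(line)
```

Because LLM calls are I/O-bound, this style of concurrency works well despite the GIL: the sub-agents spend most of their time waiting on network responses, not competing for the interpreter.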

Conclusion: Your Path Forward in Python AI Development

The resurgence of Python through the LLM revolution isn’t just a trend—it’s a fundamental shift in how software is built. Python’s combination of accessibility and power makes it the ideal language for an era where the primary development activity shifts from writing algorithms to orchestrating intelligence.

Your Action Plan:

- Install the modern toolchain – Poetry, ruff, and uv for professional Python development

- Master structured extraction – Learn Pydantic and Pydantic AI for type-safe LLM interactions

- Build an agent with tools – Start with simple tool-calling, graduate to multi-agent systems

- Implement observability – Add tracing before you need it, not after something breaks

- Stay current – The field moves fast; follow frameworks like DSPy and libraries like Pydantic AI as they evolve

The most valuable skill in 2026 isn’t knowing specific model details—those change weekly. It’s understanding the patterns and architectures that make LLM systems reliable, maintainable, and safe. Python gives you the tools to master these patterns.

Now go build something amazing.